Peter Singer — utilitarian extraordinaire, spokesman for involuntary euthanasia, and advocate of infanticide — recently shared with millions of rapt readers his opinions about why and how health care must be rationed: “Why We Must Ration Health Care,” The New York Times Magazine, July 15, 2009. Given Singer’s penchant for playing God, the “we” of his title could be an imperial one, but — in this instance — it is an authoritarian one.

Singer is among the many “public intellectuals” (some of them Nobelists) who believe in an omniscient, infallible government, provided — of course — that it does things unto the rest of us the way that they (the “intellectuals”) would have them done. And, like most of those “intellectuals,” Singer is dead wrong in his assertions about how to “solve” the “health care problem,” because his underlying premises and “logic” are dead wrong.

I begin with Singer’s central thesis:

Health care is a scarce resource, and all scarce resources are rationed in one way or another. In the United States, most health care is privately financed, and so most rationing is by price: you get what you, or your employer, can afford to insure you for.

Those two sentences are replete with inaccuracy and error:

- There is no such thing as “health care”; the term is a catch-all for a wide variety of goods and services, ranging from the self-administration of generic aspirin to complex, delicate neurological surgery.

- In any event, “health care” is not a “resource”; its various forms are economic goods (i.e., products and services), the production of which requires the use of resources (e.g., the time of trained nurses and doctors, the raw materials and production facilities used in drug manufacture).

- Economic goods are not rationed by price; price facilitates voluntary transactions between willing buyers and sellers in free markets. Rationing is what happens when a powerful authority (usually a government) steps in to dictate the organization of markets, the specifications of goods, and — more extremely — who may buy what goods and at what prices (though dictated prices are essentially meaningless because they do not perform the signaling function that they do in free markets).

- Much “health care” in the United States is privately financed, to the extent that most Americans buy and self-administer products like aspirin, antihistamines, cough medicine, band-aids, etc., and some (though relatively few) Americans buy medical products and services without the benefit of insurance. But much “health care” is not privately financed, because — as Singer soon notes — there is a substantial taxpayer subsidy for employer-sponsored insurance programs. There are various other taxpayer subsidies and government restraints (e.g., Medicare, Medicaid, government-funded research of diseases and medicines, FDA approval of most kinds of medications and personal-care products).

The slipperiest of Singer’s facile statements is his characterization of what happens in free markets as “rationing,” thus lending back-handed legitimacy to true rationing, which is brute-force interference by government in what is really a personal responsibility: caring for one’s health. For it has somehow come to be common currency that “health care” is a “right,” something that government ought to do for us, instead of something that we ought to do for ourselves. (After all, we do live in an age of “positive rights,” which come at a high cost to everyone, including those who seek them.)

Most Americans are, however, enmeshed in a Catch-22 situation. They have less money to provide for themselves because it has been taken from them by government, to provide for others. But Singer deems the provision inadequate:

In the public sector, primarily Medicare, Medicaid and hospital emergency rooms, health care is rationed by long waits, high patient copayment requirements, low payments to doctors that discourage some from serving public patients and limits on payments to hospitals.

Singer’s “solution” is to make things worse:

The case for explicit health care rationing in the United States starts with the difficulty of thinking of any other way in which we can continue to provide adequate health care to people on Medicaid and Medicare, let alone extend coverage to those who do not now have it.

How will outright rationing entice doctors and hospitals to provide services that they are now unwilling to provide? If doctors leave the medical profession, and new doctors enter at reduced rates, what would Singer do? Begin drafting students into medical schools? What about hospitals that refuse to conform? Would they be nationalized, along with their nurses, orderlies, etc.?

What a pretty picture: Soviet-style medicine here in the U.S. of A. Yet that is precisely where outright rationing will lead if the politburo in Washington sees a shrinking supply of doctors, hospitals, and other medical providers — as it will. Most politicians do not know how to do less. When they create a mess, their natural inclination is to do more of what they did to cause the mess in the first place.

Singer, naturally, appeals to the authority of just such a politician:

President Obama has said plainly that America’s health care system is broken. It is, he has said, by far the most significant driver of America’s long-term debt and deficits. It is hard to see how the nation as a whole can remain competitive if in 26 years we are spending nearly a third of what we earn on health care, while other industrialized nations are spending far less but achieving health outcomes as good as, or better than, ours.

Well, if BO says it, it must be true, n’est-ce pas? The “system” is broken because government established Medicare and Medicaid, back in the days of LBJ’s “Great Society.” Those two programs have “only” four fatal flaws:

- They take money from taxpayers, who therefore are less able to provide for themselves.

- They grant beneficiaries “free” or low-cost access to medical services, thus bloating the demand for those services and causing their prices to rise. (The subsidy of employer-sponsored health insurance has the same effect.)

- They involve promises of access to medical services that cannot be redeemed by the paltry Medicare tax rate — thus the prospect of ballooning deficits, leading to (a) higher interest rates and/or (b) higher taxes on (you guessed it) “the rich.”

- “The rich,” who finance economic growth, will flee these shores (or their money will), and the deficits will grow larger as tax revenues fall.

Another natural inclination of politicians is to deplore the messes caused by other politicians, and then to do something to make the messes worse. In this instance, BO itches to trump LBJ.

But, of course, this time will be different — it will be done right:

Rationing health care means getting value for the billions we are spending by setting limits on which treatments should be paid for from the public purse. If we ration we won’t be writing blank checks to pharmaceutical companies for their patented drugs, nor paying for whatever procedures doctors choose to recommend. When public funds subsidize health care or provide it directly, it is crazy not to try to get value for money. The debate over health care reform in the United States should start from the premise that some form of health care rationing is both inescapable and desirable. Then we can ask, What is the best way to do it?

So, instead of insurance companies — which at least compete with each other to offer subscribers affordable and attractive lineups of providers and drug formularies — our choices will be dictated by all-wise bureaucrats. Lovely!

Singer defends the bureaucrats, as long as they do it his way, of course. He begins with NICE:

…Britain’s National Institute for Health and Clinical Excellence…. generally known as NICE, is a government-financed but independently run organization set up to provide national guidance on promoting good health and treating illness…. NICE had set a general limit of £30,000, or about $49,000, on the cost of extending life for a year….

There’s no doubt that it’s tough — politically, emotionally and ethically — to make a decision that means that someone will die sooner than they would have if the decision had gone the other way….

Governments implicitly place a dollar value on a human life when they decide how much is to be spent on health care programs and how much on other public goods that are not directed toward saving lives. The task of health care bureaucrats is then to get the best value for the resources they have been allocated….

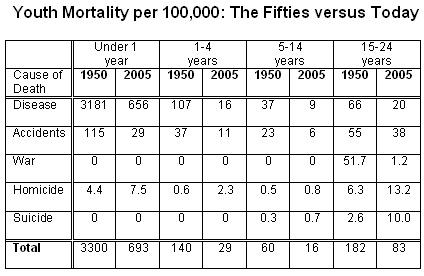

As a first take, we might say that the good achieved by health care is the number of lives saved. But that is too crude. The death of a teenager is a greater tragedy than the death of an 85-year-old, and this should be reflected in our priorities. We can accommodate that difference by calculating the number of life-years saved, rather than simply the number of lives saved. If a teenager can be expected to live another 70 years, saving her life counts as a gain of 70 life-years, whereas if a person of 85 can be expected to live another 5 years, then saving the 85-year-old will count as a gain of only 5 life-years. That suggests that saving one teenager is equivalent to saving 14 85-year-olds. These are, of course, generic teenagers and generic 85-year-olds….

Health care does more than save lives: it also reduces pain and suffering. How can we compare saving a person’s life with, say, making it possible for someone who was confined to bed to return to an active life? We can elicit people’s values on that too. One common method is to describe medical conditions to people — let’s say being a quadriplegic — and tell them that they can choose between 10 years in that condition or some smaller number of years without it. If most would prefer, say, 10 years as a quadriplegic to 4 years of nondisabled life, but would choose 6 years of nondisabled life over 10 with quadriplegia, but have difficulty deciding between 5 years of nondisabled life or 10 years with quadriplegia, then they are, in effect, assessing life with quadriplegia as half as good as nondisabled life. (These are hypothetical figures, chosen to keep the math simple, and not based on any actual surveys.) If that judgment represents a rough average across the population, we might conclude that restoring to nondisabled life two people who would otherwise be quadriplegics is equivalent in value to saving the life of one person, provided the life expectancies of all involved are similar.

This is the basis of the quality-adjusted life-year, or QALY, a unit designed to enable us to compare the benefits achieved by different forms of health care. The QALY has been used by economists working in health care for more than 30 years to compare the cost-effectiveness of a wide variety of medical procedures and, in some countries, as part of the process of deciding which medical treatments will be paid for with public money. If a reformed U.S. health care system explicitly accepted rationing, as I have argued it should, QALYs could play a similar role in the U.S.
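The arithmetic in the passage just quoted can be checked with a few lines of Python. This is only a sketch of Singer's own hypothetical figures (he says they are not drawn from any survey); the function names are illustrative and do not come from the article or from any QALY methodology document.

```python
# Singer's hypothetical numbers, reproduced only to check the arithmetic.
# All function names here are illustrative assumptions.

def quality_weight(indifference_years: float, years_in_condition: float) -> float:
    """Time trade-off weight: healthy years judged equal to years in the condition."""
    return indifference_years / years_in_condition


def qalys(years: float, weight: float) -> float:
    """Quality-adjusted life-years: years lived times the quality weight."""
    return years * weight


# Life-years: a teenager with 70 years left versus an 85-year-old with 5.
teen_life_years = 70
elder_life_years = 5
teens_to_elders = teen_life_years / elder_life_years

# Respondents are indifferent between 5 nondisabled years and 10 with quadriplegia.
weight_quadriplegia = quality_weight(5, 10)

# Over the same 10-year horizon: gain from restoring one person to nondisabled
# life, versus gain from saving one nondisabled life outright.
gain_per_restoration = qalys(10, 1.0) - qalys(10, weight_quadriplegia)
gain_per_life_saved = qalys(10, 1.0)
restorations_per_life = gain_per_life_saved / gain_per_restoration

print(teens_to_elders)        # 14.0
print(weight_quadriplegia)    # 0.5
print(restorations_per_life)  # 2.0
```

The output matches the claims in the quotation: one teenager counts as 14 85-year-olds, and two restorations count as one life saved. Every result depends on whose weights are fed into `quality_weight`.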

Here we have utilitarianism rampant on a field of fascism. Given that (in Singer’s mind) “we” must nationalize medicine (i.e., ration “health care”), “we” must do it right. To do it right, “we” must weigh human life on a scale of Singer’s devising, and not on the scale of our individual preferences. For Singer knows all! And government knows all, as long as it operates according to Singer’s calculus of deservingness.

And why must “we” ration health care? Singer invokes a familiar statistic:

In the U.S., some 45 million do not [have health insurance], and nor are they entitled to any health care at all, unless they can get themselves to an emergency room.

Who are those legendary 45 (or 47) million persons? Are they entirely bereft of medical attention? Here are some answers to those questions, from June and Dave O’Neill’s “Who are the Uninsured? An Analysis of America’s Uninsured Population, Their Characteristics and Their Health”:

Each year the Census Bureau reports its estimate of the total number of adults and children in the U.S. who lacked health insurance coverage during the previous calendar year. The number of Americans reported as uninsured in 2006 was 47 million, which was close to 16 percent of the U.S. population… This number has come to have a large impact on the debate over healthcare reform in the United States. However, there is a great deal of confusion about the significance of the uninsured numbers.

Many people believe that the number of uninsured signifies that almost 50 million Americans are without healthcare simply because they cannot afford a health insurance policy and as a consequence, suffer from poor health, and premature death. However this line of reasoning is based on a distorted characterization of the facts….

More careful analysis of the statistics on the uninsured shows that many uninsured individuals and families appear to have enough disposable income to purchase health insurance, yet choose not to do so, and instead self-insure. We call this group the “voluntarily uninsured” and find that they account for 43 percent of the uninsured population. The remaining group—the “involuntarily uninsured”—makes up only 57 percent of the Census count of the uninsured. A second important point is that while the uninsured receive fewer medical services than those with private insurance, they nonetheless receive significant amounts of healthcare from a variety of sources—government programs, private charitable groups, care donated by physicians and hospitals, and care paid for by out-of-pocket expenditures. Third, although the involuntarily uninsured by some estimates appear to have a significantly shorter life expectancy than those who are privately insured or voluntarily uninsured, it is difficult to establish cause and effect. We find that differences in mortality according to insurance status are to a large extent explained by factors other than health insurance coverage—such as education, socioeconomic status, and health-related habits like smoking…..

The results [of a regression analysis] vividly show the importance of controlling for characteristics that are strongly related to health status and health outcomes and are also strongly related to insurance status. The unadjusted gross difference in mortality risk between those with private insurance and the involuntarily uninsured was -0.113 or 11 percentage points. After adding to the model all characteristics, including the variable indicating fair/poor health status (M3), we find that the differential in the mortality risk between those with private insurance and those who are involuntarily uninsured is reduced to -0.029, a 2.9 percentage point difference.

The unadjusted differential between the privately insured and the voluntarily uninsured … was small—only 3.3 percentage points—because the characteristics of the two groups are fairly similar. That differential becomes even smaller after controlling for measurable differences in characteristics. Thus … the mortality rate of the voluntarily uninsured is only 1.7 percentage points below that of the privately insured….

In summary, we find as have others, that lack of health insurance is not likely to be the major factor causing higher mortality rates among the uninsured. The uninsured—particularly the involuntarily uninsured—have multiple disadvantages that in themselves are associated with poor health.
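For a sense of scale, the figures quoted above can be turned into head counts and into the share of each mortality gap that disappears once the authors' controls are added. This is a rough sketch using only the numbers in the quotation. The variable names and the `share_explained` helper are illustrative assumptions, and the gaps are entered as magnitudes (the quotation reports them with a negative sign).

```python
# Figures as quoted from the O'Neill study; names below are illustrative only.
UNINSURED_MILLIONS = 47.0   # Census count for 2006
VOLUNTARY_SHARE = 0.43      # "voluntarily uninsured"
INVOLUNTARY_SHARE = 0.57    # "involuntarily uninsured"

voluntary_millions = UNINSURED_MILLIONS * VOLUNTARY_SHARE
involuntary_millions = UNINSURED_MILLIONS * INVOLUNTARY_SHARE


def share_explained(unadjusted_gap: float, adjusted_gap: float) -> float:
    """Fraction of the raw mortality gap that vanishes after adding controls."""
    return (unadjusted_gap - adjusted_gap) / unadjusted_gap


# Mortality-risk gaps relative to the privately insured, as magnitudes.
involuntary_explained = share_explained(0.113, 0.029)
voluntary_explained = share_explained(0.033, 0.017)

print(f"voluntarily uninsured:   {voluntary_millions:.1f} million")
print(f"involuntarily uninsured: {involuntary_millions:.1f} million")
print(f"gap explained (involuntary): {involuntary_explained:.0%}")
print(f"gap explained (voluntary):   {voluntary_explained:.0%}")
```

On these numbers, roughly 20 million of the 47 million are uninsured by choice, and about three-quarters of the raw mortality gap for the involuntarily uninsured is accounted for by characteristics other than insurance status.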

(See also The Henry J. Kaiser Family Foundation’s The Uninsured: A Primer, Supplemental Data Tables, October 2008.)

In summary, the so-called crisis in “health care” is a figment of fevered imaginations. To the extent that medical care and medications are more costly than they “should” be, it is because of government interference: restrictions on the entry of doctors and other providers (thanks to the lobbying efforts of the AMA — the doctors’ “union” — and similar organizations); long and often deadly FDA approval procedures for new drugs; subsidies for employer-provided health insurance; and the establishment of Medicare and Medicaid.

The obvious solution to the “crisis” — obvious to anyone who isn’t wedded to the religion of big government — is to get government out of medicine. But that won’t happen because the “crisis” is yet another excuse for politicians and pundits (like Singer, and worse) to dictate the terms and conditions of our lives. Unfortunately, too many voters are susceptible to the siren call of government action. Such voters are more than ready to elect politicians who promise to “do something” about trumped-up crises — be they crises of “health care,” “global warming,”

What will happen with the current “crisis”? The result will be something less destructive than BO’s preferred result, which would effectively nationalize medicine in the United States by making all providers and drug companies beholden to a single payer (i.e., government) and leveling the quality of medical care to a mediocre standard through mandatory participation in the nationalized scheme. But the result, whatever it is, will be destructive:

- Many providers will quit providing, and fewer new providers will replace them, unless they are enticed by tax-funded subsidies.

- Drug companies will develop fewer new drugs, unless they are co-opted by tax-funded subsidies.

- “The rich” will be forced to bear a disproportionate share of the cost of making things worse. And so, “the rich” will have less wherewithal with which to stimulate economic growth, and less inclination to do so (in the United States, at least).

Politicians being politicians, the resulting mess will have only one obvious solution: outright nationalization of medicine in the U.S. (The politburo, of course, will enjoy a separate and distinctly superior brand of taxpayer-funded medical care.)

And then we will have become thoroughly European.