This is the first post in a series. It will leave you hanging. But despair not, the series will come to a point — eventually. In the meantime, enjoy the ride.

Before we can consider time and existence, we must consider whether they are illusions.

Regarding time, there’s a reasonable view that nothing exists but the present (the now) or, rather, an infinite number of nows. In the conventional view, one now succeeds another, which creates the illusion of the passage of time. In the view of some physicists, however, all nows exist at once, and we merely perceive a sequential slice of all the nows. Inasmuch as there seems to be general agreement as to the contents of that slice, the only evidence that many nows exist in parallel consists of claims about such phenomena as clairvoyance, visions, and co-location. I won’t wander into that thicket.

A problem with the conventional view of time is that not everyone perceives the same now at the same time. At least, that’s what Einstein’s special theory of relativity says. A problem with the view that all nows exist at once (known as the block-universe view, or eternalism) is that it’s purely a mathematical concoction. Unless you’re a clairvoyant, visionary, or the like.

Oh, wait, the special theory of relativity is also a mathematical concoction. Further, it doesn’t really show that not everyone perceives the same now at the same time. The key to special relativity, the Lorentz transformation, enables one to reconcile the various nows; that is, to be a kind of omniscient observer. So, in effect, there really is a now.
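The claim about the Lorentz transformation can be made concrete with its standard time equation, for two observers moving at relative speed v along a shared x axis (c is the speed of light). If two events are simultaneous for one observer (Δt = 0) but separated by a distance Δx, the other observer assigns them different times. Yet because the formula is exact, either observer can compute the other’s times, which is the sense in which the various nows can be reconciled.

```latex
t' = \gamma\left(t - \frac{v\,x}{c^{2}}\right), \qquad \gamma = \frac{1}{\sqrt{1 - v^{2}/c^{2}}}

\Delta t = 0 \;\Rightarrow\; \Delta t' = \gamma\left(0 - \frac{v\,\Delta x}{c^{2}}\right) = -\frac{\gamma\, v\, \Delta x}{c^{2}}
```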

This leads to the question of what distinguishes one now from another now. The answer is change. If things didn’t change, there would be only a now, not an infinite series of them. More precisely, if things didn’t seem to change, time would seem to stand still. This is another way of saying that a succession of nows creates the illusion of the passage of time.

What happens between one now and the next now? Change, not the passage of time. What we think of as the passage of time is really an artifact of change.

Time is really nothing more than the counting of events that supposedly occur at set intervals — the “ticking” of an atomic clock, for example. I say supposedly because there’s no absolute measure of time against which one can calibrate the “ticking” of an atomic clock, or any other kind of clock.

In summary: Clocks don’t measure time. Clocks merely change (“tick”) at supposedly regular intervals, and those intervals are used in the representation of other things, such as the speed of an automobile or the duration of a 100-yard dash.
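To make the point about clocks concrete: the SI second is defined as 9,192,631,770 periods of the radiation from a particular transition of the cesium-133 atom, so an atomic clock’s reading is, at bottom, a count. The Python sketch below converts a raw tick count into seconds and then into a speed. The function names and the tick count assumed for the 100-yard dash are hypothetical, chosen only for illustration.

```python
# SI definition: one second is 9,192,631,770 periods of the cesium-133
# hyperfine-transition radiation. The clock only counts; "seconds" are a convention.
CESIUM_TICKS_PER_SECOND = 9_192_631_770

YARD_IN_METERS = 0.9144  # exact by international agreement


def ticks_to_seconds(ticks: int) -> float:
    """Hypothetical helper: express a raw tick count as seconds."""
    return ticks / CESIUM_TICKS_PER_SECOND


def speed_m_per_s(distance_m: float, ticks: int) -> float:
    """Hypothetical helper: speed is distance divided by the counted ticks, rescaled to seconds."""
    return distance_m / ticks_to_seconds(ticks)


if __name__ == "__main__":
    dash_m = 100 * YARD_IN_METERS               # a 100-yard dash, in meters
    dash_ticks = 10 * CESIUM_TICKS_PER_SECOND   # hypothetical count for a 10-second run
    print(ticks_to_seconds(dash_ticks))         # 10.0
    print(speed_m_per_s(dash_m, dash_ticks))
```

Nothing in the calculation checks whether the ticks were evenly spaced; their regularity is simply assumed.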

Time is an illusion. Or, if that conclusion bothers you, let’s just say that time is an ephemeral quality that depends on change.

Change is real. But change in what — of what does reality consist?

There are two basic views of reality. One of them, posited by Bishop Berkeley and his followers, is that the only reality is that which goes on in one’s own mind. But that’s just another way of saying that humans don’t perceive the external world directly. Rather, it is perceived second-hand, through the senses that detect external phenomena and transmit signals to the brain, which is where “reality” is formed.

There is an extreme version of the Berkeleyan view: Everything perceived is only a kind of dream or illusion. But even a dream or illusion is something, not nothing, so there is some kind of existence.

The sensible view, held by most humans (even most scientists), is that there is an objective reality out there, beyond the confines of one’s mind. How can so many people agree about the existence of certain things (e.g., Cleveland) unless there’s something out there?

Over the ages, scientists have been able to describe objective reality in ever more minute detail. But what is it? What is the stuff of which it consists? No one knows or is likely ever to know. All we know is that stuff changes, and those changes give rise to what we call time.

The big question is how things came to exist. This has been debated for millennia. There are two schools of thought:

Things just exist and have always existed.

Things can’t come into existence on their own, so some non-thing must have caused things to exist.

The second option leaves open the question of how the non-thing came into existence, and can be interpreted as a variant of the first option; that is, some non-thing just exists and has always existed.

How can the big question be resolved? It can’t be resolved by facts or logic. If it could be, there would be wide agreement about the answer. (Not perfect agreement because a lot of human beings are impervious to facts and logic.) But there isn’t and never will be wide agreement.

Why is that? Can’t scientists someday trace the existence of things – call it the universe – back to a source? Isn’t that what the Big Bang Theory is all about? No and no. If the universe has always existed, there’s no source to be tracked down. And if the universe was created by a non-thing, how can scientists detect the non-thing if they’re only equipped to deal with things?

The Big Bang Theory posits a definite beginning, at a more or less definite point in time. But even if the theory is correct, it doesn’t tell us how that beginning began. Did things start from scratch, and if they did, what caused them to do so? And maybe they didn’t; maybe the Big Bang was just the result of the collapse of a previous universe, which was the result of a previous one, etc., etc., etc., ad infinitum.

Some scientists who think about such things (most of them, I suspect) don’t believe that the universe was created by a non-thing. But their disbelief stems from not wanting to believe it. The much smaller number of similar scientists who believe that the universe was created by a non-thing hold that belief because they want to hold it.

That’s life in the world of science, just as it is in the world of non-science, where believers, non-believers, and those who can’t make up their minds find all kinds of ways in which to rationalize what they believe (or don’t believe), even though they know less than scientists do about the universe.

Let’s just accept that and move on to another big question: What is it that exists? It’s not “stuff” as we usually think of it – like mud or sand or water droplets. It’s not even atoms and their constituent particles. Those are just convenient abstractions for what seem to be various manifestations of electromagnetic forces, or emanations thereof, such as light.

But what are electromagnetic forces? And what does their behavior (to be anthropomorphic about it) have to do with the way that things like planets, stars, and galaxies move in relation to one another? There are lots of theories, but none of them has as yet gained wide acceptance by scientists. And even if one theory does gain wide acceptance, there’s no telling how long it will be before it’s supplanted by a new theory.

That’s the thing about science: It’s a process, not a particular result. Human understanding of the universe offers a good example. Here’s a short list of beliefs about the universe that were considered true by scientists, and then rejected:

Thales (c. 620 – c. 530 BC): The Earth rests on water.

Anaximenes (c. 586 – c. 526 BC): Everything is made of air.

Heraclitus (c. 540 – c. 480 BC): All is fire.

Empedocles (c. 493 – c. 435 BC): There are four elements: earth, air, fire, and water.

Democritus (c. 460 – c. 370 BC): Atoms (basic elements of nature) come in an infinite variety of shapes and sizes.

Aristotle (384 – 322 BC): Heavy objects must fall faster than light ones. The universe is a series of crystalline spheres that carry the sun, moon, planets, and stars around Earth.

Ptolemy (90 – 168 AD): Ditto the Earth-centric universe, with a mathematical description.

Copernicus (1473 – 1543): The planets revolve around the sun in perfectly circular orbits.

Brahe (1546 – 1601): The planets revolve around the sun, but the sun and moon revolve around Earth.

Kepler (1571 – 1630): The planets revolve around the sun in elliptical orbits, and their trajectories are governed by magnetism.

Newton (1642 – 1727): The course of the planets around the sun is determined by gravity, which is a force that acts at a distance. Light consists of corpuscles; ordinary matter is made of larger corpuscles. Space and time are absolute and uniform.

Rutherford (1871 – 1937), Bohr (1885 – 1962), and others: The atom has a center (nucleus), which consists of two elementary particles, the neutron and proton.

Einstein (1879 – 1955): The universe is neither expanding nor shrinking.

That’s just a small fraction of the mistaken and incomplete theories that have held sway in the field of physics. There are many more such mistakes and lacunae in the other natural sciences: biology, chemistry, and earth science — each of which, like physics, has many branches. And in all of the branches there are many unresolved questions. For example, the Standard Model of particle physics, despite its complexity, is known to be incomplete. And it is thought (by some) to be unduly complex; that is, there may be a simpler underlying structure waiting to be discovered.

Given all of this, it is grossly presumptuous to claim that climate science (to take a salient example) is “settled” when the phenomena that it encompasses are so varied, complex, often poorly understood, and often given short shrift (e.g., the effect of solar activity on the intensity of cosmic radiation reaching Earth, which affects low-level cloud formation, which in turn affects atmospheric temperature and precipitation).

Anyone who says that any aspect of science is “settled” is ignorant, stupid, or freighted with a political agenda. Anyone who says that “science is real” is merely parroting an empty slogan.

Matt Ridley (quoted by Judith Curry) explains:

In a lecture at Cornell University in 1964, the physicist Richard Feynman defined the scientific method. First, you guess, he said, to a ripple of laughter. Then you compute the consequences of your guess. Then you compare those consequences with the evidence from observations or experiments. “If [your guess] disagrees with experiment, it’s wrong. In that simple statement is the key to science. It does not make a difference how beautiful the guess is, how smart you are, who made the guess or what his name is…it’s wrong.”…

In general, science is much better at telling you about the past and the present than the future. As Philip Tetlock of the University of Pennsylvania and others have shown, forecasting economic, meteorological or epidemiological events more than a short time ahead continues to prove frustratingly hard, and experts are sometimes worse at it than amateurs, because they overemphasize their pet causal theories….

Peer review is supposed to be the device that guides us away from unreliable heretics. Investigations show that peer review is often perfunctory rather than thorough; often exploited by chums to help each other; and frequently used by gatekeepers to exclude and extinguish legitimate minority scientific opinions in a field.

Herbert Ayres, an expert in operations research, summarized the problem well several decades ago: “As a referee of a paper that threatens to disrupt his life, [a professor] is in a conflict-of-interest position, pure and simple. Unless we’re convinced that he, we, and all our friends who referee have integrity in the upper fifth percentile of those who have so far qualified for sainthood, it is beyond naive to believe that censorship does not occur.” Rosalyn Yalow, winner of the Nobel Prize in medicine, was fond of displaying the letter she received in 1955 from the Journal of Clinical Investigation noting that the reviewers were “particularly emphatic in rejecting” her paper.

The health of science depends on tolerating, even encouraging, at least some disagreement. In practice, science is prevented from turning into religion not by asking scientists to challenge their own theories but by getting them to challenge each other, sometimes with gusto.

As I said, there is no such thing as “settled science”. Real science is a vast realm of unsettled uncertainty. Newton put it thus:

I do not know what I may appear to the world, but to myself I seem to have been only like a boy playing on the seashore, and diverting myself in now and then finding a smoother pebble or a prettier shell than ordinary, whilst the great ocean of truth lay all undiscovered before me.

Certainty is the last refuge of a person whose mind is closed to new facts and new ways of looking at old facts.

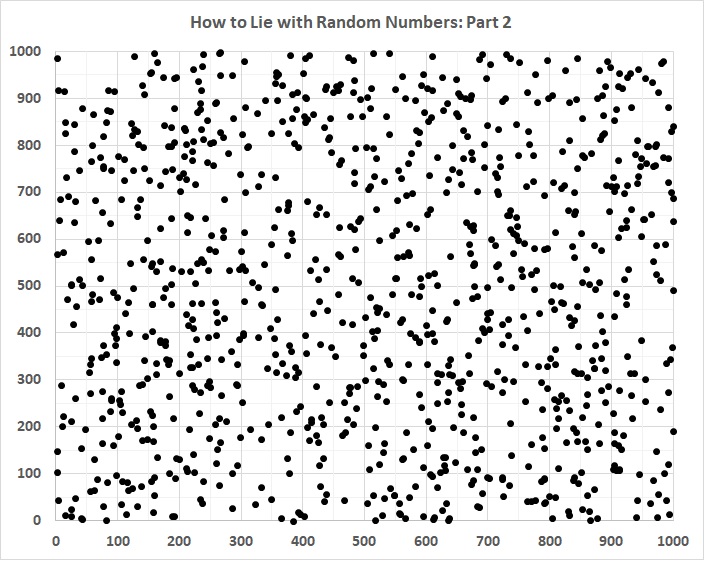

How uncertain is the real world, especially the world of events yet to come? Consider a simple, three-parameter model in which event C depends on the occurrence of event B, which depends on the occurrence of event A; in which the value of the outcome is the sum of the values of the events that occur; and in which the value of each event is binary: 1 if it happens, 0 if it doesn’t. Even in a simple model like that, there is a wide range of possible outcomes; thus:

A doesn’t occur (B and C therefore don’t occur) = 0.

A occurs but B fails to occur (and C therefore doesn’t occur) = 1.

A occurs, B occurs, but C fails to occur = 2.

A occurs, B occurs, and C occurs = 3.

Even when A occurs, subsequent events (or non-events) will yield final outcomes ranging in value from 1 to 3, a threefold spread. A factor of 3 is a big deal. It’s why .300 hitters make millions of dollars a year and .100 hitters sell used cars.
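The model is small enough to enumerate exhaustively. The following Python sketch (the function name `outcome_value` is hypothetical) treats each event as either happening or not, lets B count only if A happened and C count only if B happened, and collects the distinct outcome values.

```python
from itertools import product


def outcome_value(a: bool, b: bool, c: bool) -> int:
    """Value of one scenario: each event that occurs is worth 1,
    but B counts only if A occurred, and C counts only if B occurred."""
    value = 0
    if a:
        value += 1
        if b:
            value += 1
            if c:
                value += 1
    return value


# Every combination of "would happen if its prerequisite happened" (8 in all).
all_outcomes = sorted({outcome_value(a, b, c) for a, b, c in product([False, True], repeat=3)})
print(all_outcomes)  # [0, 1, 2, 3]

# Restrict to the cases in which A occurs.
given_a = sorted({outcome_value(True, b, c) for b, c in product([False, True], repeat=2)})
print(given_a)                      # [1, 2, 3]
print(max(given_a) / min(given_a))  # 3.0
```

The chaining is what keeps eight combinations down to four distinct values: once a prerequisite fails, later events contribute nothing, whatever they would have done.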

Let’s leave it at that and move on.