BACKGROUND

A few weeks ago I posted “A Bayesian Puzzle”. I took it down because Bayesianism warranted more careful treatment than I had given it. But while the post was live at Ricochet (where I cross-posted in September-November), I had an exchange with a reader who is an obdurate believer in single-event probabilities, such as “the probability of heads on the next coin flip is 50 percent” and “the probability of 12 on the next roll of a pair of dice is 1/36”. That wasn’t the first such exchange that I’ve had; “Some Thoughts about Probability” reports an earlier and more thoughtful exchange with a believer in single-event probabilities.

DISCUSSION

A believer in single-event probabilities takes the view that a single flip of a coin or roll of dice has a probability. I do not. A probability represents the frequency with which an outcome occurs over the very long run, and it is only an average that conceals random variations.

The outcome of a single coin flip can’t be reduced to a percentage or probability. It can only be described in terms of its discrete, mutually exclusive possibilities: heads (H) or tails (T). The outcome of a single roll of a die or pair of dice can only be described in terms of the number of points that may come up, 1 through 6 or 2 through 12.

Yes, the expected frequencies of H, T, and various point totals can be computed by simple mathematical operations. But those are only expected frequencies. They say nothing about the next coin flip or dice roll, nor do they more than approximate the actual frequencies that will occur over the next 100, 1,000, or 10,000 such events.

Of what value is it to know that the probability of H is 0.5 when H fails to occur in 11 consecutive flips of a fair coin? Of what value is it to know that the probability of rolling a 7 is 0.167 — meaning that 7 comes up every 6 rolls, on average — when 7 may not appear for 56 consecutive rolls? These examples are drawn from simulations of 10,000 coin flips and 1,000 dice rolls. They are simulations that I ran once — not simulations that I cherry-picked from many runs. (The Excel file is at https://drive.google.com/open?id=1FABVTiB_qOe-WqMQkiGFj2f70gSu6a82 — coin flips are on the first tab, dice rolls are on the second tab.)

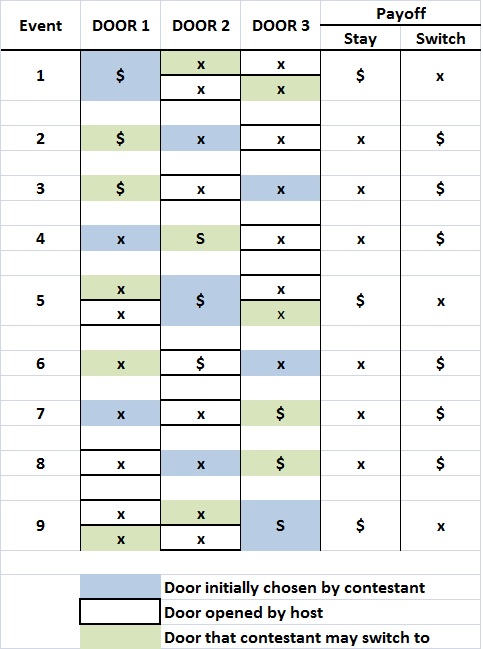
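For readers who would rather not open a spreadsheet, here is a minimal Python sketch of the same kind of experiment. It is not the Excel workbook linked above, and it will not reproduce the exact streaks of 11 and 56 reported there. The seed and the helper name `longest_gap` are my own assumptions, chosen only for illustration.

```python
import random


def longest_gap(outcomes, target):
    """Length of the longest consecutive stretch in which `target` never appears."""
    longest = current = 0
    for outcome in outcomes:
        # Reset the counter when the target shows up; otherwise extend the gap.
        current = 0 if outcome == target else current + 1
        longest = max(longest, current)
    return longest


rng = random.Random(2024)  # arbitrary seed (an assumption), used only for repeatability

# 10,000 flips of a fair coin and 1,000 rolls of a pair of fair dice.
flips = [rng.choice("HT") for _ in range(10_000)]
rolls = [rng.randint(1, 6) + rng.randint(1, 6) for _ in range(1_000)]

print("share of H:", flips.count("H") / len(flips))
print("longest run without H:", longest_gap(flips, "H"))
print("share of 7:", rolls.count(7) / len(rolls))
print("longest run without 7:", longest_gap(rolls, 7))
```

Changing the seed changes the streaks, which is the point: the long-run shares stay near 0.5 and 0.167 while the dry spells vary from run to run.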

Let’s take another example, one that is more interesting and has generated much controversy over the years. It’s the Monty Hall problem,

a brain teaser, in the form of a probability puzzle, loosely based on the American television game show Let’s Make a Deal and named after its original host, Monty Hall. The problem was originally posed (and solved) in a letter by Steve Selvin to the American Statistician in 1975…. It became famous as a question from a reader’s letter quoted in Marilyn vos Savant’s “Ask Marilyn” column in Parade magazine in 1990 … :

Suppose you’re on a game show, and you’re given the choice of three doors: Behind one door is a car; behind the others, goats. You pick a door, say No. 1, and the host, who knows what’s behind the doors, opens another door, say No. 3, which has a goat. He then says to you, “Do you want to pick door No. 2?” Is it to your advantage to switch your choice?

Vos Savant’s response was that the contestant should switch to the other door…. Under the standard assumptions, contestants who switch have a 2/3 chance of winning the car, while contestants who stick to their initial choice have only a 1/3 chance.

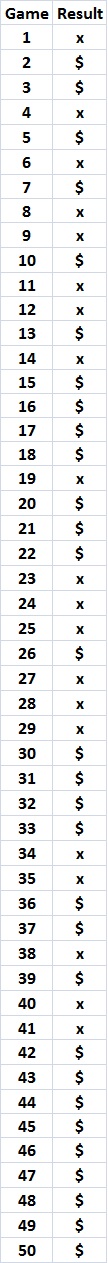

Vos Savant’s answer is correct, but only if the contestant is allowed to play an unlimited number of games. A player who adopts a strategy of “switch” in every game will, in the long run, win about 2/3 of the time (explanation here). That is, the player has a better chance of winning if he chooses “switch” rather than “stay”.

Read the preceding paragraph carefully and you will spot the logical defect that underlies the belief in single-event probabilities: The long-run winning strategy (“switch”) is transformed into a “better chance” to win a particular game. What does that mean? How does an average frequency of 2/3 improve one’s chances of winning a particular game? It doesn’t. As I show here, game results are utterly random; that is, the average frequency of 2/3 has no bearing on the outcome of a single game.
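Both halves of that statement can be checked with a short simulation. What follows is a rough sketch under the standard assumptions (the host knows where the car is and always opens a goat door other than the contestant's pick). The function names `play` and `win_rate`, the seed, and the number of games are assumptions of mine, not anything taken from the linked posts.

```python
import random


def play(switch, rng):
    """Play one game; return True if the contestant wins the car."""
    doors = [0, 1, 2]
    car = rng.choice(doors)
    pick = rng.choice(doors)
    # The host opens a door that hides a goat and is not the contestant's pick.
    opened = rng.choice([d for d in doors if d != pick and d != car])
    if switch:
        # Move to the one door that is neither the original pick nor the opened door.
        pick = next(d for d in doors if d != pick and d != opened)
    return pick == car


def win_rate(switch, games, rng):
    """Fraction of `games` won when following one strategy throughout."""
    return sum(play(switch, rng) for _ in range(games)) / games


rng = random.Random(7)  # arbitrary seed (an assumption), used only for repeatability

print("switch, 20,000 games:", win_rate(True, 20_000, rng))
print("stay,   20,000 games:", win_rate(False, 20_000, rng))

# Ten individual games played with "switch": each one is simply a win or a loss.
print("ten single games:", "".join("W" if play(True, rng) else "L" for _ in range(10)))
```

The first two lines of output show the long-run frequencies. The last line shows what any one game looks like: a W or an L, never two-thirds of a car.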

I’ll try to drive the point home by returning to the coin-flip game, with money thrown into the mix. A $1 bet on H means a gain of $1 if H turns up, and a loss of $1 if T turns up. The expected value of the bet, if repeated over a very large number of trials, is zero. The bettor expects to win and lose the same number of times, and to walk away no richer or poorer than when he started. And for a very large number of games, the bettor will walk away approximately (but not necessarily exactly) neither richer nor poorer than when he started. How many games? In the simulation of 10,000 games mentioned earlier, H occurred 50.6 percent of the time. A very large number of games is probably at least 100,000.

Let us say, for the sake of argument, that a bettor has played 100,000 coin-flip games at $1 a game and come out exactly even. What does that mean for the play of the next game? Does it have an expected value of zero?

To see why the answer is “no”, let’s make it interesting and say that the bet on the next game — the next coin flip — is $10,000. The size of the bet should wonderfully concentrate the bettor’s mind. He should now see the situation for what it really is: There are two possible outcomes, and only one of them will be realized. An average of the two outcomes is meaningless. The single coin flip doesn’t have a “probability” of 0.5 H and 0.5 T and an “expected payoff” of zero. The coin will come up either H or T, and the bettor will either lose $10,000 or win $10,000.

To repeat: The outcome of a single coin flip doesn’t have an expected value for the bettor. It has two possible values, and the bettor must decide whether he is willing to lose $10,000 on the single flip of a coin.
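The two situations, many $1 bets and one $10,000 bet, can be put side by side in a few lines of Python. This is only an illustrative sketch: the function name `play_games`, the seed, and the batch sizes are assumptions of mine, and a fair coin is assumed throughout.

```python
import random


def play_games(games, stake, rng):
    """Bet `stake` dollars on H in each of `games` fair flips; return (heads, net)."""
    heads = sum(rng.random() < 0.5 for _ in range(games))
    tails = games - heads
    net = stake * heads - stake * tails  # win the stake on H, lose it on T
    return heads, net


rng = random.Random(11)  # arbitrary seed (an assumption), used only for repeatability

# Many small bets: the share of H drifts toward one half, and the net result is
# small relative to the total amount wagered, though rarely exactly zero.
for games in (100, 10_000, 100_000):
    heads, net = play_games(games, 1, rng)
    print(f"{games:>7} games: H {heads / games:.1%}, net {net:+,} dollars")

# One large bet: the result is one of two values and never the average of them.
_, single = play_games(1, 10_000, rng)
print(f"single flip: net {single:+,} dollars")
```

The computed average of the two payoffs on the single flip is zero, but zero is not among the values the last line can print.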

By the same token (or coin), the outcome of a single roll of a pair of dice doesn’t have a 1-in-6 probability of coming up 7. It has 36 possible outcomes and 11 possible point totals, and the bettor must decide how much he is willing to lose if he puts his money on the wrong combination or outcome.
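The counts cited above (36 outcomes, 11 point totals, and a computed frequency of 1 in 6 for a total of 7) can be verified by listing every combination. This is a small sketch using only Python's standard library; the variable names are mine.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# Every ordered combination of two six-sided dice.
outcomes = list(product(range(1, 7), repeat=2))

# How many of those combinations produce each point total.
totals = Counter(a + b for a, b in outcomes)

# The computed long-run frequency of each total, kept as an exact fraction.
frequency = {total: Fraction(count, len(outcomes)) for total, count in totals.items()}

print("possible outcomes:", len(outcomes))
print("possible point totals:", len(totals))
for total in sorted(frequency):
    print(f"total {total:>2}: {totals[total]} of {len(outcomes)} combinations = {frequency[total]}")
```

The table it prints is a statement about the whole set of 36 combinations. A single roll lands on exactly one of them.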

CONCLUSION

It is a logical fallacy to ascribe a probability to a single event. A probability represents the observed or computed average value of a very large number of like events. A single event cannot possess that average value. A single event has a finite number of discrete and mutually exclusive outcomes. Those outcomes will not “average out” — only one of them will obtain, like Schrödinger’s cat.

To say that the outcomes will average out — which is what a probability implies — is tantamount to saying that Jack Sprat and his wife were neither skinny nor fat because their body-mass indices averaged to a normal value. It is tantamount to saying that one can’t drown by walking across a pond with an average depth of 1 foot, when that average conceals the existence of a 100-foot-deep hole.

Related posts:

Understanding the Monty Hall Problem

The Compleat Monty Hall Problem

Some Thoughts about Probability

My War on the Misuse of Probability

Scott Adams Understands Probability