A correspondent sent me some links to writings of Nassim Nicholas Taleb. One of them is “The Intellectual Yet Idiot,” in which Taleb makes some acute observations; for example:

What we have been seeing worldwide, from India to the UK to the US, is the rebellion against the inner circle of no-skin-in-the-game policymaking “clerks” and journalists-insiders, that class of paternalistic semi-intellectual experts with some Ivy league, Oxford-Cambridge, or similar label-driven education who are telling the rest of us 1) what to do, 2) what to eat, 3) how to speak, 4) how to think… and 5) who to vote for.

But the problem is the one-eyed following the blind: these self-described members of the “intelligentsia” can’t find a coconut in Coconut Island, meaning they aren’t intelligent enough to define intelligence hence fall into circularities — but their main skill is capacity to pass exams written by people like them….

The Intellectual Yet Idiot is a production of modernity hence has been accelerating since the mid twentieth century, to reach its local supremum today, along with the broad category of people without skin-in-the-game who have been invading many walks of life. Why? Simply, in most countries, the government’s role is between five and ten times what it was a century ago (expressed in percentage of GDP)….

The IYI pathologizes others for doing things he doesn’t understand without ever realizing it is his understanding that may be limited. He thinks people should act according to their best interests and he knows their interests, particularly if they are “red necks” or English non-crisp-vowel class who voted for Brexit. When plebeians do something that makes sense to them, but not to him, the IYI uses the term “uneducated”. What we generally call participation in the political process, he calls by two distinct designations: “democracy” when it fits the IYI, and “populism” when the plebeians dare voting in a way that contradicts his preferences….

The IYI has been wrong, historically, on Stalinism, Maoism, GMOs, Iraq, Libya, Syria, lobotomies, urban planning, low carbohydrate diets, gym machines, behaviorism, transfats, freudianism, portfolio theory, linear regression, Gaussianism, Salafism, dynamic stochastic equilibrium modeling, housing projects, selfish gene, Bernie Madoff (pre-blowup) and p-values. But he is convinced that his current position is right.

That’s all yummy red meat to a person like me, especially in the wake of November 8, which Taleb’s piece predates. But the last paragraph quoted above reminded me that I had read something critical about a paper in which Taleb applies the precautionary principle. So I found the paper, which is by Taleb (lead author) and several others. This is from the abstract:

Here we formalize PP [the precautionary principle], placing it within the statistical and probabilistic structure of “ruin” problems, in which a system is at risk of total failure, and in place of risk we use a formal “fragility” based approach. In these problems, what appear to be small and reasonable risks accumulate inevitably to certain irreversible harm….

Our analysis makes clear that the PP is essential for a limited set of contexts and can be used to justify only a limited set of actions. We discuss the implications for nuclear energy and GMOs. GMOs represent a public risk of global harm, while harm from nuclear energy is comparatively limited and better characterized. PP should be used to prescribe severe limits on GMOs. [“The Precautionary Principle (With Application to the Genetic Modification of Organisms),” Extreme Risk Initiative – NYU School of Engineering Working Paper Series]

Jon Entine demurs:

Taleb has recently become the darling of GMO opponents. He and four colleagues–Yaneer Bar-Yam, Rupert Read, Raphael Douady and Joseph Norman–wrote a paper, The Precautionary Principle (with Application to the Genetic Modification of Organisms), released last May and updated last month, in which they claim to bring risk theory and the Precautionary Principle to the issue of whether GMOs might introduce “systemic risk” into the environment….

The crux of his claims: There is no comparison between conventional selective breeding of any kind, including mutagenesis which requires the radiation or chemical dousing of seeds (and has resulted in more than 2500 varieties of fruits, vegetables, and nuts, almost all available in organic varieties) versus what he calls the top-down engineering that occurs when a gene is taken from an organism and transferred to another (ignoring that some forms of genetic engineering, including gene editing, do not involve gene transfers). Taleb goes on to argue that the chance of ecocide, or the destruction of the environment and potentially of humans, increases incrementally with each additional transgenic trait introduced into the environment. In other words, in his mind genetic engineering is a classic “black swan” scenario.

Neither Taleb nor any of the co-authors has any background in genetics or agriculture or food, or even familiarity with the Precautionary Principle as it applies to biotechnology, which they liberally invoke to justify their positions….

One of the paper’s central points displays his clear lack of understanding of modern crop breeding. He claims that the rapidity of the genetic changes using the rDNA technique does not allow the environment to equilibrate. Yet rDNA techniques are actually among the safest crop breeding techniques in use today because each rDNA crop represents only 1-2 genetic changes that are more thoroughly tested than any other crop breeding technique. The number of genetic changes caused by hybridization or mutagenesis techniques are orders of magnitude higher than rDNA methods. And no testing is required before widespread monoculture-style release. Even selective breeding likely represents a more rapid change than rDNA techniques because of the more rapid employment of the method today.

In essence, Taleb’s ecocide argument applies just as much to other agricultural techniques in both conventional and organic agriculture. The only difference between GMOs and other forms of breeding is that genetic engineering is closely evaluated, minimizing the potential for unintended consequences. Most geneticists–experts in this field as opposed to Taleb–believe that genetic engineering is far safer than any other form of breeding.

Moreover, as Maxx Chatsko notes, the natural environment has encountered new traits from unthinkable events (extremely rare occurrences of genetic transplantation across continents, species and even planetary objects, or extremely rare single mutations that gave an incredible competitive advantage to a species or virus) that have led to problems and genetic bottlenecks in the past — yet we’re all still here and the biosphere remains tremendously robust and diverse. So much for Mr. Doomsday. [“Is Nassim Taleb a ‘Dangerous Imbecile’ or on [sic] the Pay of Anti-GMO Activists?” Genetic Literacy Project, November 13, 2014 — see footnote for an explanation of “dangerous imbecile”]

Gregory Conko also demurs:

The paper received a lot of attention in scientific circles, but was roundly dismissed for being long on overblown rhetoric but conspicuously short on any meaningful reference to the scientific literature describing the risks and safety of genetic engineering, and for containing no understanding of how modern genetic engineering fits within the context of centuries of far more crude genetic modification of plants, animals, and microorganisms.

Well, Taleb is back, this time penning a short essay published on The New York Times’s DealB%k blog with co-author Mark Spitznagel. The authors try to draw comparisons between the recent financial crisis and GMOs, claiming the latter represent another “Too Big to Fail” crisis in waiting. Unfortunately, Taleb’s latest contribution is nothing more than the same sort of evidence-free bombast posing as thoughtful analysis. The result is uninformed and/or unintelligible gibberish….

“In nature, errors stay confined and, critically, isolated.” Ebola, anyone? Avian flu? Or, for examples that are not “in nature” but the “small step” changes Spitznagel and Taleb seem to prefer, how about the introduction of hybrid rice plants into parts of Asia that have led to widespread outcrossing to and increased weediness in wild red rices? Or kudzu? Again, this seems like a bold statement designed to impress. But it is completely untethered to any understanding of what actually occurs in nature or the history of non-genetically engineered crop introductions….

“[T]he risk of G.M.O.s are more severe than those of finance. They can lead to complex chains of unpredictable changes in the ecosystem, while the methods of risk management with G.M.O.s — unlike finance, where some effort was made — are not even primitive.” Again, the authors evince no sense that they understand how extensively breeders have been altering the genetic composition of plants and other organisms for the past century, or what types of risk management practices have evolved to coincide.

In fact, compared with the wholly voluntary (and yet quite robust) risk management practices that are relied upon to manage introductions of mutant varieties, somaclonal variants, wide crosses, and the products of cell fusion, the legally obligatory risk management practices used for genetically engineered plant introductions are vastly over-protective.

In the end, Spitznagel and Taleb’s argument boils down to a claim that ecosystems are complex and rDNA modification seems pretty mysterious to them, so nobody could possibly understand it. Until they can offer some arguments that take into consideration what we actually know about genetic modification of organisms (by various methods) and why we should consider rDNA modification uniquely risky when other methods result in even greater genetic changes, the rest of us are entitled to ignore them. [“More Unintelligible Gibberish on GMO Risks from Nicholas Nassim Taleb,” Competitive Enterprise Institute, July 16, 2015]

And despite my enjoyment of Taleb’s red-meat commentary about IYIs, I have to admit that I’ve had my fill of Taleb’s probabilistic gibberish. This is from “Fooled by Non-Randomness,” which I wrote seven years ago about Taleb’s Fooled by Randomness:

The first reason that I am unfooled by Fooled… might be called a meta-reason. Standing back from the book, I am able to perceive its essential defect: According to Taleb, human affairs — especially economic affairs, and particularly the operations of financial markets — are dominated by randomness. But if that is so, only a delusional person can truly claim to understand the conduct of human affairs. Taleb claims to understand the conduct of human affairs. Taleb is therefore either delusional or omniscient.

Given Taleb’s humanity, it is more likely that he is delusional — or simply fooled, but not by randomness. He is fooled because he proceeds from the assumption of randomness instead of exploring the ways and means by which humans are actually capable of shaping events. Taleb gives no more than scant attention to those traits which, in combination, set humans apart from other animals: self-awareness, empathy, forward thinking, imagination, abstraction, intentionality, adaptability, complex communication skills, and sheer brain power. Given those traits (in combination) the world of human affairs cannot be random. Yes, human plans can fail of realization for many reasons, including those attributable to human flaws (conflict, imperfect knowledge, the triumph of hope over experience, etc.). But the failure of human plans is due to those flaws — not to the randomness of human behavior.

What Taleb sees as randomness is something else entirely. The trajectory of human affairs often is unpredictable, but it is not random. For it is possible to find patterns in the conduct of human affairs, as Taleb admits (implicitly) when he discusses such phenomena as survivorship bias, skewness, anchoring, and regression to the mean….

[R]andom events as events which are repeatable, convergent on a limiting value, and truly patternless over a large number of repetitions. Evolving economic events (e.g., stock-market trades, economic growth) are not alike (in the way that dice are, for example), they do not converge on limiting values, and they are not patternless, as I will show.

In short, Taleb fails to demonstrate that human affairs in general or financial markets in particular exhibit randomness, properly understood….

A bit of unpredictability (or “luck”) here and there does not make for a random universe, random lives, or random markets. If a bit of unpredictability here and there dominated our actions, we wouldn’t be here to talk about randomness — and Taleb wouldn’t have been able to marshal his thoughts into a published, marketed, and well-sold book.

Human beings are not “designed” for randomness. Human endeavors can yield unpredictable results, but those results do not arise from random processes, they derive from skill or the lack thereof, knowledge or the lack thereof (including the kinds of self-delusions about which Taleb writes), and conflicting objectives….

No one believes that Ty Cobb, Babe Ruth, Ted Williams, Christy Mathewson, Warren Spahn, and the dozens of other baseball players who rank among the truly great were lucky. No one believes that the vast majority of the tens of thousands of minor leaguers who never enjoyed more than the proverbial cup of coffee were unlucky. No one believes that the vast majority of the millions of American males who never made it to the minor leagues were unlucky. Most of them never sought a career in baseball; those who did simply lacked the requisite skills.

In baseball, as in life, “luck” is mainly an excuse and rarely an explanation. We prefer to apply “luck” to outcomes when we don’t like the true explanations for them. In the realm of economic activity and financial markets, one such explanation … is the exogenous imposition of governmental power….

Given what I have said thus far, I find it almost incredible that anyone believes in the randomness of financial markets. It is unclear where Taleb stands on the random-walk hypothesis, but it is clear that he believes financial markets to be driven by randomness. Yet, contradictorily, he seems to attack the efficient-markets hypothesis (see pp. 61-62), which is the foundation of the random-walk hypothesis.

What is the random-walk hypothesis? In brief, it is this: Financial markets are so efficient that they instantaneously reflect all information bearing on the prices of financial instruments that is then available to persons buying and selling those instruments….

When we step back from day-to-day price changes, we are able to see the underlying reality: prices (instead of changes) and price trends (which are the opposite of randomness). This (correct) perspective enables us to see that stock prices (on the whole) are not random, and to identify the factors that influence the broad movements of the stock market. For one thing, if you look at stock prices correctly, you can see that they vary cyclically….

[But] the long-term trend of the stock market (as measured by the S&P 500) is strongly correlated with GDP. And broad swings around that trend can be traced to governmental intervention in the economy….

The wild swings around the trend line began in the uncertain aftermath of World War I, which saw the imposition of production and price controls. The swings continued with the onset of the Great Depression (which can be traced to governmental action), the advent of the anti-business New Deal, and the imposition of production and price controls on a grand scale during World War II. The next downswing was occasioned by the culmination of the Great Society, the “oil shocks” of the early 1970s, and the raging inflation that was touched off by — you guessed it — government policy. The latest downswing is owed mainly to the financial crisis born of yet more government policy: loose money and easy loans to low-income borrowers.

And so it goes, wildly but predictably enough if you have the faintest sense of history. The moral of the story: Keep your eye on government and a hand on your wallet.

There is randomness in economic affairs, but they are not dominated by randomness. They are dominated by intentions, including especially the intentions of the politicians and bureaucrats who run governments. Yet, Taleb has no space in his book for the influence of their deeds on economic activity and financial markets.

Taleb is right to disparage those traders (professional and amateur) who are lucky enough to catch upswings, but are unprepared for downswings. And he is right to scoff at their readiness to believe that the current upswing (uniquely) will not be followed by a downswing (“this time it’s different”).

But Taleb is wrong to suggest that traders are fooled by randomness. They are fooled to some extent by false hope, but more profoundly by their inability to perceive the economic damage wrought by government. They are not alone, of course; most of the rest of humanity shares their perceptual failings.

Taleb, in that respect, is only somewhat different than most of the rest of humanity. He is not fooled by false hope, but he is fooled by non-randomness — the non-randomness of government’s decisive influence on economic activity and financial markets. In overlooking that influence he overlooks the single most powerful explanation for the behavior of markets in the past 90 years.
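The definition of randomness quoted above (repeatable trials, convergence on a limiting value, no pattern over many repetitions) is the textbook frequentist one. As a minimal sketch, assuming a fair six-sided die as the random device, the running average of simulated rolls settles toward the die's expected value of 3.5. The function name and the seed are illustrative choices, not anything taken from the essays quoted here.

```python
import random

def running_means(num_rolls: int, seed: int = 0) -> list[float]:
    """Roll a fair six-sided die num_rolls times; return the running average after each roll."""
    rng = random.Random(seed)  # seeded so the run is reproducible
    total = 0
    means = []
    for i in range(1, num_rolls + 1):
        total += rng.randint(1, 6)  # each face 1..6 equally likely
        means.append(total / i)
    return means

means = running_means(100_000)
for n in (10, 100, 1_000, 100_000):
    print(n, round(means[n - 1], 3))  # early averages wander; late ones hug 3.5
```

Nothing comparable happens with a sequence of stock-market trades or GDP figures, which is the contrast the quoted passage draws: the trials are not alike and there is no fixed limiting value to converge on.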

I followed up a few days later with “Randomness Is Over-Rated”:

What we often call random events in human affairs really are non-random events whose causes we do not and, in some cases, cannot know. Such events are unpredictable, but they are not random….

Randomness … is found in (a) the results of non-intentional actions, where (b) we lack sufficient knowledge to understand the link between actions and results.

It is unreasonable to reduce intentional human behavior to probabilistic formulas. Humans don’t behave like dice, roulette balls, or similar “random” devices. But that is what Taleb (and others) do when they ascribe unusual success in financial markets to “luck.”…

I say it again: The most successful professionals are not successful because of luck, they are successful because of skill. There is no statistically predetermined percentage of skillful traders; the actual percentage depends on the skills of entrants and their willingness (if skillful) to make a career of it….

The outcomes of human endeavor are skewed because the distribution of human talents is skewed. It would be surprising to find as many as one-half of traders beating the long-run average performance of the various markets in which they operate….

[Taleb] sees an a priori distribution of “winners” and “losers,” where “winners” are determined mainly by luck, not skill. Moreover, we — the civilians on the sidelines — labor under a false impression about the relative number of “winners”….

[T]here are no “odds” favoring success — even in financial markets. Financial “players” do what they can do, and most of them — like baseball players — simply don’t have what it takes for great success. Outcomes are skewed, not because of (fictitious) odds but because talent is distributed unevenly.

The real lesson … is not to assume that the “winners” are merely lucky. No, the real lesson is to seek out those “winners” who have proven their skills over a long period of time, through boom and bust and boom and bust.

Those who do well, over the long run, do not do so merely because they have survived. They have survived because they do well.
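The claim above, that outcomes are skewed because talent is distributed unevenly and that it would be surprising for half of all traders to beat the average, has a simple distributional counterpart. As a sketch only, assume (hypothetically; neither essay names a distribution) that long-run performance is lognormal, a common model for right-skewed quantities. The share of the population lying above its own mean then depends only on the spread parameter `sigma`, and it is always below one-half.

```python
import math

def share_above_mean_lognormal(sigma: float) -> float:
    """Fraction of a lognormal population lying above its own mean.

    If ln(X) ~ Normal(mu, sigma), then E[X] = exp(mu + sigma**2 / 2), so
    P(X > E[X]) = P(Z > sigma / 2) for a standard normal Z, whatever mu is.
    """
    z = sigma / 2
    return 0.5 * math.erfc(z / math.sqrt(2))  # upper-tail probability of N(0, 1)

for sigma in (0.25, 0.5, 1.0, 2.0):
    print(sigma, round(share_above_mean_lognormal(sigma), 3))
```

The more skewed the hypothetical distribution (larger `sigma`), the smaller the fraction that beats the average, with no appeal to luck required.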

There’s much more, and you should read the whole thing(s), as they say.

I turn now to Taleb’s version of the precautionary principle, which seems tailored to reach the conclusion Taleb wants to reach, namely, that GMOs should be banned. Who gets to decide what “threats” should be included in the “limited set of contexts” where the PP applies? Taleb, of course. Taleb has excreted a circular pile of horse manure; thus:

- The PP applies only where I (Taleb) say it applies.

- I (Taleb) say that the PP applies to GMOs.

- Therefore, the PP applies to GMOs.

I (the proprietor of this blog) say that the PP ought to apply to the works of Nassim Nicholas Taleb. They ought to be banned because they may perniciously influence gullible readers.

I’ll justify my facetious proposal to ban Taleb’s writings by working my way through the “logic” of what Taleb calls the non-naive version of the PP, on which he bases his anti-GMO stance. Here are the main points of Taleb’s argument, extracted from “The Precautionary Principle (With Application to the Genetic Modification of Organisms).” Taleb’s statements (with minor, non-substantive elisions) are in roman type, followed by my comments in bold type.

The purpose of the PP is to avoid a certain class of what, in probability and insurance, is called “ruin” problems. A ruin problem is one where outcomes of risks have a non-zero probability of resulting in unrecoverable losses. An often-cited illustrative case is that of a gambler who loses his entire fortune and so cannot return to the game. In biology, an example would be a species that has gone extinct. For nature, “ruin” is ecocide: an irreversible termination of life at some scale, which could be planetwide.

The extinction of a species is ruinous only if one believes that species shouldn’t become extinct. But they do, because that’s the way nature works. Ruin, as Taleb means it, is avoidable, self-inflicted, and (at some point) irreversibly catastrophic. Let’s stick to that version of it.

Our concern is with public policy. While an individual may be advised to not “bet the farm,” whether or not he does so is generally a matter of individual preferences. Policy makers have a responsibility to avoid catastrophic harm for society as a whole; the focus is on the aggregate, not at the level of single individuals, and on global-systemic, not idiosyncratic, harm. This is the domain of collective “ruin” problems.

This assumes that government can do something about a potentially catastrophic harm — or should do something about it. The Great Depression, for example, began as a potentially catastrophic harm that government made into a real catastrophic harm (for millions of Americans, though not all of them) and prolonged through its actions. Here Taleb commits the nirvana fallacy, by implicitly ascribing to government the power to anticipate harm without making a Type I or Type II error, and then to take appropriate and effective action to prevent or ameliorate that harm.
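The point about Type I and Type II errors can be made concrete with the standard signal-detection picture. In this sketch every number is a hypothetical assumption, not drawn from Taleb's paper or from any real policy: a regulator observes a noisy signal that is distributed Normal(0, 1) when a threat is benign and Normal(1, 1) when it is real, and acts whenever the signal crosses a chosen threshold. Raising the threshold cuts false alarms (Type I) but raises missed harms (Type II); while the two distributions overlap, no threshold drives both to zero.

```python
import math

def normal_sf(x: float, mean: float = 0.0, sd: float = 1.0) -> float:
    """Upper-tail probability P(X > x) for X ~ Normal(mean, sd)."""
    return 0.5 * math.erfc((x - mean) / (sd * math.sqrt(2)))

def error_rates(threshold: float, harm_mean: float = 1.0) -> tuple[float, float]:
    """Error rates for a hypothetical regulator who acts when a noisy signal exceeds threshold.

    Type I: acting against a benign threat. Type II: failing to act against a real one.
    """
    type_1 = normal_sf(threshold)                   # benign signal still exceeds threshold
    type_2 = 1.0 - normal_sf(threshold, harm_mean)  # harmful signal falls at or below threshold
    return type_1, type_2

for t in (-1.0, 0.0, 0.5, 1.0, 2.0):
    a, b = error_rates(t)
    print(f"threshold={t:+.1f}  type I={a:.3f}  type II={b:.3f}")
```

Choosing the threshold is itself a judgment about which error is worse, which is the kind of judgment the nirvana fallacy assumes away.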

By the ruin theorems, if you incur a tiny probability of ruin as a “one-off” risk, survive it, then do it again (another “one-off” deal), you will eventually go bust with probability 1. Confusion arises because it may seem that the “one-off” risk is reasonable, but that also means that an additional one is reasonable. This can be quantified by recognizing that the probability of ruin approaches 1 as the number of exposures to individually small risks, say one in ten thousand, increases. For this reason a strategy of risk taking is not sustainable and we must consider any genuine risk of total ruin as if it were inevitable.

But you have to know in advance that a particular type of risk will be ruinous. Which means that — given the uncertainty of such knowledge — the perception of (possible) ruin is in the eye of the assessor. (I’ll have a lot more to say about uncertainty.)
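The arithmetic behind Taleb's ruin claim is the complement rule for independent trials: if each exposure carries ruin probability p, the chance of at least one ruin in n exposures is 1 - (1 - p)^n, which tends to 1 as n grows. The sketch below assumes, as the quoted passage does, that the exposures are independent and that p is known (the "one in ten thousand" figure); those are exactly the assumptions disputed above. The function names are illustrative.

```python
import math

def ruin_probability(p: float, n: int) -> float:
    """Chance of at least one ruinous outcome in n independent exposures, each with ruin probability p."""
    return 1.0 - (1.0 - p) ** n

def exposures_for(p: float, target: float) -> int:
    """Smallest n for which ruin_probability(p, n) >= target, for 0 < target < 1."""
    return math.ceil(math.log(1.0 - target) / math.log(1.0 - p))

p = 1 / 10_000  # the "one in ten thousand" figure from the quoted passage
for n in (1, 1_000, 10_000, 50_000):
    print(n, round(ruin_probability(p, n), 4))
print(exposures_for(p, 0.5))  # exposures needed before ruin is more likely than not
```

The formula is only as good as its inputs: it says nothing about how anyone knows that a given exposure is ruinous, or what its p is.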

A way to formalize the ruin problem in terms of the destructive consequences of actions identifies harm as not about the amount of destruction, but rather a measure of the integrated level of destruction over the time it persists. When the impact of harm extends to all future times, i.e. forever, then the harm is infinite. When the harm is infinite, the product of any non-zero probability and the harm is also infinite, and it cannot be balanced against any potential gains, which are necessarily finite.

As discussed below, the concept of probability is inapplicable here. Further, and granting the use of probability for the sake of argument, Taleb’s contention holds only if there’s no doubt that the harm will be infinite, that is, totally ruinous. If there’s room for doubt, there’s room for disagreement as to the extent of the harm (if any) and the value of attempting to counter it (or not). Otherwise, it would be “rational” to devote as much as the entire economic output of the world to combat so-called catastrophic anthropogenic global warming (CAGW) because some “expert” says that there’s a non-zero probability of its occurrence. In practical terms, the logic of such a policy is that if you’re going to die of heat stroke, you might as well do it sooner rather than later — which would be one of the consequences of, say, banning the use of fossil fuels. Other consequences would be freezing to death if you live in a cold climate and starving to death because foodstuffs couldn’t be grown, harvested, processed, or transported. Those are also infinite harms, and they arise from Taleb’s preferred policy of acting on little information about a risk because (in someone’s view) it could lead to infinite harm. There’s a relevant cost-benefit analysis for you.
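The quoted argument rests on arithmetic that is easy to reproduce: once harm is set to infinity, any non-zero probability multiplied by it is infinite, and no finite gain can offset it. A minimal sketch of a bare-bones cost-benefit tally, with gains, harms, and probabilities made up purely for illustration, shows that the result follows entirely from the decision to call the harm infinite.

```python
import math

def expected_net_value(gain: float, p_gain: float, harm: float, p_harm: float) -> float:
    """Probability-weighted gain minus probability-weighted harm (a bare-bones cost-benefit tally)."""
    return p_gain * gain - p_harm * harm

# Finite harm: a large enough gain can outweigh it.
print(expected_net_value(gain=1e6, p_gain=0.99, harm=1e9, p_harm=1e-4))
# "Infinite" harm: any non-zero probability swamps every finite gain.
print(expected_net_value(gain=1e12, p_gain=0.99, harm=math.inf, p_harm=1e-12))  # -inf
```

In IEEE floating-point arithmetic `0.0 * math.inf` is `nan`, not zero, so the argument needs a strictly non-zero probability of infinite harm; everything turns on someone asserting one.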

Because the “cost” of ruin is effectively infinite, cost-benefit analysis (in which the potential harm and potential gain are multiplied by their probabilities and weighed against each other) is no longer a useful paradigm.

Here, Taleb displays profound ignorance in two fields: economics and probability. His ignorance of economics might be excusable, but his ignorance of probability isn’t, inasmuch as he’s made a name for himself (and probably a lot of money) by parading his “sophisticated” understanding of it in books and on the lecture circuit.

Regarding the economics of cost-benefit analysis (CBA), it’s properly an exercise for individual persons and firms, not governments. When a government undertakes CBA, it implicitly (and arrogantly) assumes that the costs of a project (which are defrayed in the end by taxpayers) can be weighed against the monetary benefits of the project (which aren’t distributed in proportion to the costs and are often deliberately distributed so that taxpayers bear most of the costs and non-taxpayers reap most of the benefits).

Regarding probability, Taleb quite wrongly insists on ascribing probabilities to events that might (or might not) occur in the future. A probability is a statement about a very large number of like events, each of which has an unpredictable (random) outcome. A valid probability is based either on a large number of past “trials” or a mathematical certainty (e.g., a fair coin, tossed a large number of times — 100 or more — will come up heads about half the time and tails about half the time). Probability, properly understood, says nothing about the outcome of an individual future event; that is, it says nothing about what will happen next in a truly random trial, such as a coin toss. Probability certainly says nothing about the occurrence of a unique event. Therefore, Taleb cannot validly assign a probability of “ruin” to a speculative event as little understood (by him) as the effect of GMOs on the world’s food supply.
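The frequentist point made above, that a probability describes the long-run share of outcomes in many like trials but says nothing about the next trial, can be checked by simulation. This sketch assumes a fair coin (Python's seeded pseudo-random generator stands in for it) and asks how often heads follows a streak of five heads; the streak length, sample size, and seed are arbitrary illustrative choices.

```python
import random

def next_toss_after_streak(num_sequences: int, streak: int = 5, seed: int = 1) -> float:
    """Among simulated runs that open with `streak` heads, the share whose next toss is also heads."""
    rng = random.Random(seed)
    qualifying = heads_next = 0
    for _ in range(num_sequences):
        tosses = [rng.random() < 0.5 for _ in range(streak + 1)]  # True = heads, fair coin
        if all(tosses[:streak]):  # the run opened with a streak of heads
            qualifying += 1
            heads_next += tosses[streak]  # True counts as 1
    return heads_next / qualifying

print(round(next_toss_after_streak(200_000), 3))  # close to 0.5: the streak predicts nothing
```

The long-run share is stable, yet no single toss is foretold by it; a one-time event has no long run at all.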

The non-naive PP bridges the gap between precaution and evidentiary action using the ability to evaluate the difference between local and global risks.

In other words, if there’s a subjective, non-zero probability of CAGW in Taleb’s mind, that probability should outweigh evidence about the wrongness of a belief in CAGW. And such evidence is ample, not only in the various scientific fields that impinge on climatology, but also in the failure of almost all climate models to predict the long pause in what’s called global warming. Ah, but “almost all” — in Taleb’s mind — means that there’s a non-zero probability of CAGW. It’s the “heads I win, tails you lose” method of gambling on the flip of a coin.

Here’s another way of putting it: Taleb turns the scientific method upside down by rejecting the null hypothesis (e.g., no CAGW) on the basis of evidence that confirms it (no observable rise in temperatures approaching the predictions of CAGW theory) because a few predictions happened to be close to the truth. Taleb, in his guise as the author of Fooled by Randomness, would correctly label such predictions as lucky.

While evidentiary approaches are often considered to reflect adherence to the scientific method in its purest form, it is apparent that these approaches do not apply to ruin problems. In an evidentiary approach to risk (relying on evidence-based methods), the existence of a risk or harm occurs when we experience that risk or harm. In the case of ruin, by the time evidence comes it will by definition be too late to avoid it. Nothing in the past may predict one fatal event. Thus standard evidence-based approaches cannot work.

It’s misleading to say that “by the time evidence comes it will by definition be too late to avoid it.” Taleb assumes, without proof, that the linkage between GMOs, say, and a worldwide food crisis will occur suddenly and without warning (or sufficient warning), as if GMOs will be everywhere at once and no one will have been paying attention to their effects as their use spread. That’s unlikely given broad disparities in the distribution of GMOs, the state of vigilance about them, and resistance to them in many quarters. What Taleb really says is this: Some people (Taleb among them) believe that GMOs pose an existential risk with a probability greater than zero. (Any such “probability” is fictional, as discussed above.) Therefore, the risk of ruin from GMOs is greater than zero and ruin is inevitable. By that logic, there must be dozens of certain-death scenarios for the planet. Why is Taleb wasting his time on GMOs, which are small potatoes compared with, say, asteroids? And why don’t we just slit our collective throat and get it over with?

Since there are mathematical limitations to predictability of outcomes in a complex system, the central issue to determine is whether the threat of harm is local (hence globally benign) or carries global consequences. Scientific analysis can robustly determine whether a risk is systemic, i.e. by evaluating the connectivity of the system to propagation of harm, without determining the specifics of such a risk. If the consequences are systemic, the associated uncertainty of risks must be treated differently than if it is not. In such cases, precautionary action is not based on direct empirical evidence but on analytical approaches based upon the theoretical understanding of the nature of harm. It relies on probability theory without computing probabilities. The essential question is whether or not global harm is possible or not.

More of the same.

Everything that has survived is necessarily non-linear to harm. If I fall from a height of 10 meters I am injured more than 10 times than if I fell from a height of 1 meter, or more than 1000 times than if I fell from a height of 1 centimeter, hence I am fragile. In general, every additional meter, up to the point of my destruction, hurts me more than the previous one.

This explains the necessity of considering scale when invoking the PP. Polluting in a small way does not warrant the PP because it is essentially less harmful than polluting in large quantities, since harm is non-linear.

This is just a way of saying that there’s a threshold of harm, and harm becomes ruinous when the threshold is surpassed. Which is true in some cases, but there’s a wide variety of cases and a wide range of thresholds. This is just a framing device meant to set the reader up for the sucker punch, which is that the widespread use of GMOs will be ruinous, at some undefined point. Well, we’ve been hearing that about CAGW for twenty years, and the undefined point keeps receding into the indefinite future.
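Taleb's falling example and the threshold reading of it can both be captured in a toy harm function. Everything in it is an assumption for illustration: the quadratic shape (any convex function would do) and the 20-meter ruin threshold are hypothetical, not figures from Taleb's paper. Below the threshold, harm is convex in height, so ten meters hurts more than ten times as much as one meter; at the threshold, harm jumps to "ruin."

```python
import math

def harm(height_m: float, threshold_m: float = 20.0) -> float:
    """Hypothetical injury score for a fall: quadratic below a threshold, ruinous at or above it."""
    if height_m >= threshold_m:
        return math.inf   # past the threshold: "ruin", no recovery
    return height_m ** 2  # below it, harm grows faster than height

print(harm(10) / harm(1))     # 100.0: more than 10 times the harm of a 1 m fall
print(harm(10) / harm(0.01))  # roughly a million: more than 1000 times a 1 cm fall
print(harm(25))               # inf
```

Where the threshold sits, and whether one exists at all for a given activity, is an empirical question the function cannot answer; it has to be supplied from outside.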

Thus, when impacts extend to the size of the system, harm is severely exacerbated by non-linear effects. Small impacts, below a threshold of recovery, do not accumulate for systems that retain their structure. Larger impacts cause irreversible damage. We should be careful, however, of actions that may seem small and local but then lead to systemic consequences.

“When impacts extend to the size of the system” means “when ruin is upon us there is ruin.” It’s a tautology without empirical content.

An increase in uncertainty leads to an increase in the probability of ruin, hence “skepticism” is that its impact on decisions should lead to increased, not decreased conservatism in the presence of ruin. Hence skepticism about climate models should lead to more precautionary policies.

This is through the looking glass and into the wild blue yonder. More below.

The rest of the paper is devoted to two things. One of them is making the case against GMOs because they supposedly exemplify the kind of risk that’s covered by the non-naive PP. I’ll let Jon Entine and Gregory Conko (quoted above) speak for me on that issue.

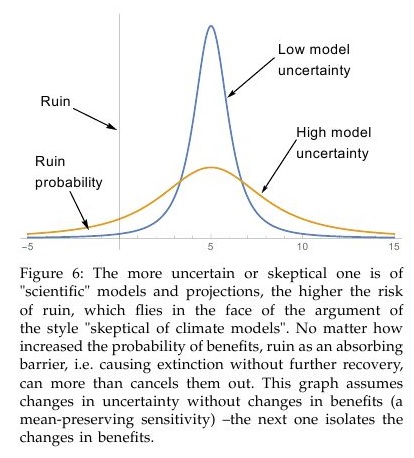

The other thing that the rest of the paper does is to spell out and debunk ten supposedly fallacious arguments against PP. I won’t go into them here because Taleb’s version of PP is self-evidently fallacious. The fallacy can be found in figure 6 of the paper:

Taleb pulls an interesting trick here — or perhaps he exposes his fundamental ignorance about probability. Let’s take it a step at a time:

- Figure 6 depicts two normal distributions. But what are they normal distributions of? Let’s say that they’re supposed to be normal distributions of the probability of the occurrence of CAGW (however that might be defined) by a certain date, in the absence of further steps to mitigate it (e.g., banning the use of fossil fuels forthwith). There’s no known normal distribution of the probability of CAGW because, as discussed above, CAGW is a unique, hypothesized (future) event which cannot have a probability. It’s not 100 tosses of a fair coin.

- The curves must therefore represent something about models that predict the arrival of CAGW by a certain date. Perhaps those predictions are normally distributed, though that has nothing to do with the “probability” of CAGW if all of the predictions are wrong.

- The two curves shown in Taleb’s figure 6 are meant (by Taleb) to represent greater and lesser certainty about the arrival of CAGW (or the ruinous scenario of his choice), as depicted by climate models.

- But if models are adjusted or built anew in the face of evidence about their shortcomings (i.e., their gross overprediction of temperatures since 1998), the newer models (those with presumably greater certainty) will have two characteristics: (a) The tails will be thinner, as Taleb suggests. (b) The mean will shift to the left or right; that is, they won’t have the same mean.

- In the case of CAGW, the mean will shift to the right because it’s already known that extant models overstate the risk of “ruin.” The left tail of the distribution of the new models will therefore shift to the right, further reducing the “probability” of CAGW.

- Taleb’s trick is to ignore that shift and, further, to implicitly assume that the two distributions coexist. By doing that he can suggest that there’s an “increase in uncertainty [that] leads to an increase in the probability of ruin.” In fact, there’s a decrease in uncertainty, and therefore a decrease in the probability of ruin.
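The point about figure 6 can be checked with a few lines of arithmetic. The Python sketch below treats "ruin" as any outcome at or below a hypothetical cutoff of -3 and computes the left-tail probability for three hypothetical normal distributions: a wide one, a narrow one with the same mean (the comparison Taleb draws), and a narrow one whose mean has shifted right (the case argued above). The cutoff, means, and standard deviations are illustrative assumptions, not values read off Taleb's figure.

```python
import math

def normal_cdf(x: float, mean: float, sd: float) -> float:
    """P(X <= x) for a normal distribution, computed with the error function."""
    return 0.5 * (1.0 + math.erf((x - mean) / (sd * math.sqrt(2.0))))

# Hypothetical cutoff: outcomes at or below RUIN count as "ruin".
RUIN = -3.0

# (mean, standard deviation) for each hypothetical distribution.
scenarios = {
    "wide (high uncertainty)": (0.0, 1.5),
    "narrow, same mean (Taleb's comparison)": (0.0, 1.0),
    "narrow, mean shifted right (the case argued above)": (0.5, 1.0),
}

for name, (mean, sd) in scenarios.items():
    # Left-tail probability of falling at or below the ruin cutoff.
    p = normal_cdf(RUIN, mean, sd)
    print(f"{name}: P(ruin) = {p:.5f}")
```

Widening a distribution around a fixed mean fattens the left tail, which is all that the figure shows. Narrowing it while the mean moves right, as happens when models are corrected for overprediction, shrinks the tail on both counts, so the "probability" of ruin falls.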

I’ll say it again: As evidence is gathered, there is less uncertainty; that is, the high-uncertainty condition precedes the low-uncertainty one. The movement from high uncertainty to low uncertainty would result in the assignment of a lower probability to a catastrophic outcome (assuming, for the moment, that such a probability is meaningful). And that would be a good reason to worry less about the eventuality of the catastrophic outcome. Taleb wants to compare the two distributions, as if the earlier one (based on little evidence) were as valid as the later one (based on additional evidence).

That’s why Taleb counsels against “evidentiary approaches.” In Taleb’s worldview, knowing little about a potential risk to health, welfare, and existence is a good reason to take action with respect to that risk. Therefore, if you know little about the risk, you should act immediately and with all of the resources at your disposal. Why? Because the risk might suddenly cause an irreversible calamity. But that’s not true of CAGW or GMOs. There’s time to gather evidence as to whether there’s truly a looming calamity, and then — if necessary — to take steps to avert it, steps that are more likely to be effective because they’re evidence-based. Further, if there’s not a looming calamity, a tremendous waste of resources will be averted.

It follows from the non-naive PP — as interpreted by Taleb — that all human beings should be sterilized and therefore prevented from procreating. This is so because sometimes just a few human beings — Hitler, Mussolini, and Tojo, for example — can cause wars. And some of those wars have harmed human beings on a nearly global scale. Global sterilization is therefore necessary, to ensure against the birth of new Hitlers, Mussolinis, and Tojos — even if it prevents the birth of new Schweitzers, Salks, Watsons, Cricks, and Mother Teresas.

In other words, the non-naive PP (or Taleb’s version of it) is pseudo-scientific claptrap. It can be used to justify any extreme and nonsensical position that its user wishes to advance. It can be summed up in an Orwellian sentence: There is certainty in uncertainty.

Perhaps this is better: You shouldn’t get out of bed in the morning because you don’t know with certainty everything that will happen to you in the course of the day.

* * *

NOTE: The title of Jon Entine’s blog post, quoted above, refers to Taleb as a “dangerous imbecile.” Here’s Entine’s explanation of that characterization:

If you think the headline of this blog [post] is unnecessarily inflammatory, you are right. It’s an ad hominem way to deal with public discourse, and it’s unfair to Nassim Taleb, the New York University statistician and risk analyst. I’m using it to make a point–because it’s Taleb himself who regularly invokes such ugly characterizations of others….

…Taleb portrays GMOs as a ‘catastrophe in waiting’–and has taken to personally lashing out at those who challenge his conclusions–and yes, calling them “imbeciles” or paid shills.

He recently accused Anne Glover, the European Union’s Chief Scientist, and one of the most respected scientists in the world, of being a “dangerous imbecile” for arguing that GM crops and foods are safe and that Europe should apply science-based risk analysis to the GMO approval process–views reflected in summary statements by every major independent science organization in the world.

Taleb’s ugly comment was gleefully and widely circulated by anti-GMO activist web sites. GMO Free USA designed a particularly repugnant visual to accompany their post.

Taleb is known for his disagreeable personality–as Keith Kloor at Discover noted, the economist Noah Smith had called Taleb a “vulgar bombastic windbag”, adding, “and I like him a lot”. He has a right to flaunt an ego bigger than the Goodyear blimp. But that doesn’t make his argument any more persuasive.

* * *

Related posts:

“Warmism”: The Myth of Anthropogenic Global Warming

More Evidence against Anthropogenic Global Warming

Yet More Evidence against Anthropogenic Global Warming

Pascal’s Wager, Morality, and the State

Modeling Is Not Science

Fooled by Non-Randomness

Randomness Is Over-Rated

Anthropogenic Global Warming Is Dead, Just Not Buried Yet

Beware the Rare Event

Demystifying Science

Pinker Commits Scientism

AGW: The Death Knell

The Pretence of Knowledge

“The Science Is Settled”

“Settled Science” and the Monty Hall Problem

The Limits of Science, Illustrated by Scientists

Some Thoughts about Probability

Rationalism, Empiricism, and Scientific Knowledge

AGW in Austin?

The “Marketplace” of Ideas

My War on the Misuse of Probability

Ty Cobb and the State of Science

Understanding Probability: Pascal’s Wager and Catastrophic Global Warming

Revisiting the “Marketplace” of Ideas

The Technocratic Illusion

The Precautionary Principle and Pascal’s Wager

AGW in Austin? (II)

Is Science Self-Correcting?