Balderdash is nonsense, to put it succinctly. Less succinctly, balderdash is stupid or illogical talk; senseless rubbish. Rather thoroughly, it is:

balls, bull, rubbish, shit, rot, crap, garbage, trash, bunk, bullshit, hot air, tosh, waffle, pap, cobblers, bilge, drivel, twaddle, tripe, gibberish, guff, moonshine, claptrap, hogwash, hokum, piffle, poppycock, bosh, eyewash, tommyrot, horsefeathers, or buncombe.

I have encountered innumerable examples of balderdash in my 35 years of full-time work, 14 subsequent years of blogging, and many overlapping years as an observer of the political scene. This essay documents some of the worst balderdash that I have come across.

THE LIMITS OF SCIENCE

Science (or what too often passes for it) generates an inordinate amount of balderdash. Consider an article in The Christian Science Monitor: “Why the Universe Isn’t Supposed to Exist”, which reads in part:

The universe shouldn’t exist — at least according to a new theory.

Modeling of conditions soon after the Big Bang suggests the universe should have collapsed just microseconds after its explosive birth, the new study suggests.

“During the early universe, we expected cosmic inflation — this is a rapid expansion of the universe right after the Big Bang,” said study co-author Robert Hogan, a doctoral candidate in physics at King’s College in London. “This expansion causes lots of stuff to shake around, and if we shake it too much, we could go into this new energy space, which could cause the universe to collapse.”

Physicists draw that conclusion from a model that accounts for the properties of the newly discovered Higgs boson particle, which is thought to explain how other particles get their mass; faint traces of gravitational waves formed at the universe’s origin also inform the conclusion.

Of course, there must be something missing from these calculations.

“We are here talking about it,” Hogan told Live Science. “That means we have to extend our theories to explain why this didn’t happen.”

No kidding!

Though there’s much more to come, this example should tell you all that you need to know about the fallibility of scientists. If you need more examples, consider these.

MODELS LIE WHEN LIARS MODEL

Not that there’s anything wrong with being wrong, but there’s a great deal wrong with seizing on a transitory coincidence between two variables (CO2 emissions and “global” temperatures in the late 1900s) and spurring a massively wrong-headed “scientific” mania — the mania of anthropogenic global warming.

What it comes down to is modeling, which is simply a way of baking one’s assumptions into a pseudo-scientific mathematical concoction. Any model is dangerous in the hands of a skilled, persuasive advocate. A numerical model is especially dangerous because:

- There is abroad a naïve belief in the authoritativeness of numbers. A bad guess (even if unverifiable) seems to carry more weight than an honest “I don’t know.”

- Relatively few people are both qualified and willing to examine the parameters of a numerical model, the interactions among those parameters, and the data underlying the values of the parameters and magnitudes of their interaction.

- It is easy to “torture” or “mine” the data underlying a numerical model so as to produce a model that comports with the modeler’s biases (stated or unstated).

There are many ways to torture or mine data; for example: by omitting certain variables in favor of others; by focusing on data for a selected period of time (and not testing the results against all the data); by adjusting data without fully explaining or justifying the basis for the adjustment; by using proxies for missing data without examining the biases that result from the use of particular proxies.
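Here is a minimal Python sketch of the "selected period" trick. The series is invented for illustration and is not climate or economic data. Taken over all its years it has no trend, but a least-squares line fitted only to a hand-picked six-year stretch climbs steeply. The `ols_slope` helper is a plain ordinary least-squares fit written for this example.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx

# Hypothetical annual series: it rises, then falls back, so the full record is trendless.
years = list(range(2000, 2018))
values = [1, 0, 1, 0, 1, 2, 3, 4, 5, 5, 4, 3, 2, 1, 0, 1, 0, 1]

full_slope = ols_slope(years, values)

# "Selected period": keep only 2003-2008, the stretch where the series happens to climb.
window = [(y, v) for y, v in zip(years, values) if 2003 <= y <= 2008]
window_slope = ols_slope([y for y, _ in window], [v for _, v in window])

print(f"trend over all years: {full_slope:+.2f} per year")
print(f"trend over 2003-2008: {window_slope:+.2f} per year")
```

The same data support "no trend" or "one unit a year," depending on which years are admitted. That is why results fitted to a window need to be checked against the full record.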

So, the next time you read about research that purports to “prove” or “predict” such-and-such about a complex phenomenon — be it the future course of economic activity or global temperatures — take a deep breath and ask these questions:

- Is the “proof” or “prediction” based on an explicit model, one that is or can be written down? (If the answer is “no,” you can confidently reject the “proof” or “prediction” without further ado.)

- Are the data underlying the model available to the public? If there is some basis for confidentiality (e.g., where the data reveal information about individuals or are derived from proprietary processes), are the data available to researchers upon the execution of confidentiality agreements?

- Are significant portions of the data reconstructed, adjusted, or represented by proxies? If the answer is “yes,” it is likely that the model was intended to yield “proofs” or “predictions” of a certain type (e.g., global temperatures are rising because of human activity).

- Are there well-documented objections to the model? (It takes only one well-founded objection to disprove a model, regardless of how many so-called scientists stand behind it.) If there are such objections, have they been answered fully, with factual evidence, or merely dismissed (perhaps with accompanying scorn)?

- Has the model been tested rigorously by researchers who are unaffiliated with the model’s developers? With what results? Are the results highly sensitive to the data underlying the model; for example, does the omission or addition of another year’s worth of data change the model or its statistical robustness? Does the model comport with observations made after the model was developed?
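The last question (does one more or one fewer year of data change the result?) can be probed with a leave-one-out check. That is one simple approach among many. The Python sketch below refits a linear trend once per year, leaving that year out each time. The data are hypothetical: a flat series with one unusual final year. Dropping that single year erases the "trend" entirely.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx

def drop_one_slopes(xs, ys):
    """Refit the trend once per observation, leaving that single observation out."""
    slopes = []
    for i in range(len(xs)):
        kept_x = xs[:i] + xs[i + 1:]
        kept_y = ys[:i] + ys[i + 1:]
        slopes.append(ols_slope(kept_x, kept_y))
    return slopes

# Hypothetical series: flat for nine years, then one unusual final year.
years = list(range(2008, 2018))
values = [0, 0, 0, 0, 0, 0, 0, 0, 0, 10]

print(f"full-sample trend: {ols_slope(years, values):.3f}")
slopes = drop_one_slopes(years, values)
print(f"trend with one year dropped ranges from {min(slopes):.3f} to {max(slopes):.3f}")
```

A trend that survives only when one particular year is included is not robust, however strong it looks in the full sample.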

For two masterful demonstrations of the role of data manipulation and concealment in the debate about climate change, read Steve McIntyre’s presentation and this paper by Syun-Ichi Akasofu. For a general explanation of the sham, see this.

SCIENCE VS. SCIENTISM: STEVEN PINKER’S BALDERDASH

The examples that I’ve adduced thus far (and most of those that follow) demonstrate a mode of thought known as scientism: the application of the tools and language of science to create a pretense of knowledge.

No less a personage than Steven Pinker defends scientism in “Science Is Not Your Enemy”. Actually, Pinker doesn’t overtly defend scientism, which is indefensible; he just redefines it to mean science:

The term “scientism” is anything but clear, more of a boo-word than a label for any coherent doctrine. Sometimes it is equated with lunatic positions, such as that “science is all that matters” or that “scientists should be entrusted to solve all problems.” Sometimes it is clarified with adjectives like “simplistic,” “naïve,” and “vulgar.” The definitional vacuum allows me to replicate gay activists’ flaunting of “queer” and appropriate the pejorative for a position I am prepared to defend.

Scientism, in this good sense, is not the belief that members of the occupational guild called “science” are particularly wise or noble. On the contrary, the defining practices of science, including open debate, peer review, and double-blind methods, are explicitly designed to circumvent the errors and sins to which scientists, being human, are vulnerable.

After that slippery performance, it’s all smooth sailing — or so Pinker thinks — because all he has to do is point out all the good things about science. And if scientism=science, then scientism is good, right?

Wrong. Scientism remains indefensible, and there’s a lot of scientism in what passes for science. Pinker says this, for example:

The new sciences of the mind are reexamining the connections between politics and human nature, which were avidly discussed in Madison’s time but submerged during a long interlude in which humans were assumed to be blank slates or rational actors. Humans, we are increasingly appreciating, are moralistic actors, guided by norms and taboos about authority, tribe, and purity, and driven by conflicting inclinations toward revenge and reconciliation.

There is nothing new in this, as Pinker admits by adverting to Madison. Nor was the understanding of human nature “submerged” except in the writings of scientistic social “scientists”. We ordinary mortals were never fooled. Moreover, Pinker’s idea of scientific political science seems to be data-dredging:

With the advent of data science—the analysis of large, open-access data sets of numbers or text—signals can be extracted from the noise and debates in history and political science resolved more objectively.

As explained here, data-dredging is about as scientistic as it gets:

When enough hypotheses are tested, it is virtually certain that some falsely appear statistically significant, since every data set with any degree of randomness contains some spurious correlations. Researchers using data mining techniques if they are not careful can be easily misled by these apparently significant results, even though they are mere artifacts of random variation.
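The quoted warning can be demonstrated with a short Python simulation. Every variable is pure random noise, so any "significant" correlation is spurious by construction. Several choices here are arbitrary: 200 candidate predictors, 50 observations each, and the rough large-sample cutoff |r| > 1.96/√n for a two-sided 5 percent test. The `familywise_error` helper gives the chance that at least one of k independent tests of true null hypotheses comes up "significant," which is 1 − (1 − α)^k.

```python
import math
import random

def pearson_r(xs, ys):
    """Sample Pearson correlation coefficient."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

def familywise_error(k, alpha=0.05):
    """Chance that at least one of k independent true-null tests looks significant."""
    return 1 - (1 - alpha) ** k

def count_spurious_hits(n_hypotheses=200, n_obs=50, seed=1):
    """Correlate one pure-noise 'outcome' with many pure-noise 'predictors' and
    count how many clear a rough 5% significance bar by chance alone."""
    rng = random.Random(seed)
    outcome = [rng.gauss(0, 1) for _ in range(n_obs)]
    # Large-sample approximation: |r| > 1.96 / sqrt(n) is roughly p < 0.05, two-sided.
    threshold = 1.96 / math.sqrt(n_obs)
    hits = 0
    for _ in range(n_hypotheses):
        predictor = [rng.gauss(0, 1) for _ in range(n_obs)]
        if abs(pearson_r(predictor, outcome)) > threshold:
            hits += 1
    return hits

print(f"{count_spurious_hits()} of 200 noise predictors pass the 5% bar")
print(f"chance of at least one false positive in 20 tests: {familywise_error(20):.0%}")
```

On average about 5 percent of the noise predictors (roughly 10 of 200) clear the bar. With just 20 tests, the chance of at least one false "discovery" is about 64 percent.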

Turning to the humanities, Pinker writes:

[T]here can be no replacement for the varieties of close reading, thick description, and deep immersion that erudite scholars can apply to individual works. But must these be the only paths to understanding? A consilience with science offers the humanities countless possibilities for innovation in understanding. Art, culture, and society are products of human brains. They originate in our faculties of perception, thought, and emotion, and they cumulate [sic] and spread through the epidemiological dynamics by which one person affects others. Shouldn’t we be curious to understand these connections? Both sides would win. The humanities would enjoy more of the explanatory depth of the sciences, to say nothing of the kind of a progressive agenda that appeals to deans and donors. The sciences could challenge their theories with the natural experiments and ecologically valid phenomena that have been so richly characterized by humanists.

What on earth is Pinker talking about? This is over-the-top bafflegab worthy of Professor Irwin Corey. But because it comes from the keyboard of a noted (self-promoting) academic, we are meant to take it seriously.

Yes, art, culture, and society are products of human brains. So what? Poker is, too, and it’s a lot more amenable to explication by the mathematical tools of science. But the successful application of those tools depends on traits that are more art than science (e.g., bluffing, spotting “tells”, and avoiding “tells”).

More “explanatory depth” in the humanities means a deeper pile of B.S. Great art, literature, and music aren’t concocted formulaically. If they could be, modernism and postmodernism wouldn’t have yielded mountains of trash.

Oh, I know: It will be different next time. As if the tools of science are immune to misuse by obscurantists, relativists, and practitioners of political correctness. Tell it to those climatologists who dare to challenge the conventional wisdom about anthropogenic global warming. Tell it to the “sub-human” victims of the Third Reich’s medical experiments and gas chambers.

Pinker anticipates this kind of objection:

At a 2011 conference, [a] colleague summed up what she thought was the mixed legacy of science: the eradication of smallpox on the one hand; the Tuskegee syphilis study on the other. (In that study, another bloody shirt in the standard narrative about the evils of science, public-health researchers beginning in 1932 tracked the progression of untreated, latent syphilis in a sample of impoverished African Americans.) The comparison is obtuse. It assumes that the study was the unavoidable dark side of scientific progress as opposed to a universally deplored breach, and it compares a one-time failure to prevent harm to a few dozen people with the prevention of hundreds of millions of deaths per century, in perpetuity.

But the Tuskegee study was only a one-time failure in the sense that it was the only Tuskegee study. As a type of failure — the misuse of science (witting and unwitting) — it goes hand-in-hand with the advance of scientific knowledge. Should science be abandoned because of that? Of course not. But the hard fact is that science, qua science, is powerless against human nature.

Pinker plods on by describing ways in which science can contribute to the visual arts, music, and literary scholarship:

The visual arts could avail themselves of the explosion of knowledge in vision science, including the perception of color, shape, texture, and lighting, and the evolutionary aesthetics of faces and landscapes. Music scholars have much to discuss with the scientists who study the perception of speech and the brain’s analysis of the auditory world.

As for literary scholarship, where to begin? John Dryden wrote that a work of fiction is “a just and lively image of human nature, representing its passions and humours, and the changes of fortune to which it is subject, for the delight and instruction of mankind.” Linguistics can illuminate the resources of grammar and discourse that allow authors to manipulate a reader’s imaginary experience. Cognitive psychology can provide insight about readers’ ability to reconcile their own consciousness with those of the author and characters. Behavioral genetics can update folk theories of parental influence with discoveries about the effects of genes, peers, and chance, which have profound implications for the interpretation of biography and memoir—an endeavor that also has much to learn from the cognitive psychology of memory and the social psychology of self-presentation. Evolutionary psychologists can distinguish the obsessions that are universal from those that are exaggerated by a particular culture and can lay out the inherent conflicts and confluences of interest within families, couples, friendships, and rivalries that are the drivers of plot.

I wonder how Rembrandt and the Impressionists (among other pre-moderns) managed to create visual art of such evident excellence without relying on the kinds of scientific mechanisms invoked by Pinker. I wonder what music scholars would learn about excellence in composition that isn’t already evident in the general loathing of audiences for most “serious” modern and contemporary music.

As for literature, great writers know instinctively and through self-criticism how to tell stories that realistically depict character, social psychology, culture, conflict, and all the rest. Scholars (and critics), at best, can acknowledge what rings true and has dramatic or comedic merit. Scientistic pretensions in scholarship (and criticism) may result in promotions and raises for the pretentious, but they do not add to the sum of human enjoyment — which is the real test of literature.

Pinker inveighs against critics of scientism (science, in Pinker’s vocabulary) who cry “reductionism” and “simplification”. With respect to the former, Pinker writes:

Demonizers of scientism often confuse intelligibility with a sin called reductionism. But to explain a complex happening in terms of deeper principles is not to discard its richness. No sane thinker would try to explain World War I in the language of physics, chemistry, and biology as opposed to the more perspicuous language of the perceptions and goals of leaders in 1914 Europe. At the same time, a curious person can legitimately ask why human minds are apt to have such perceptions and goals, including the tribalism, overconfidence, and sense of honor that fell into a deadly combination at that historical moment.

It is reductionist to explain a complex happening in terms of a deeper principle when that principle fails to account for the complex happening. Pinker obscures that essential point by offering a silly and irrelevant example about World War I. This bit of misdirection is unsurprising, given Pinker’s foray into reductionism, The Better Angels of Our Nature: Why Violence Has Declined, discussed later.

As for simplification, Pinker says:

The complaint about simplification is misbegotten. To explain something is to subsume it under more general principles, which always entails a degree of simplification. Yet to simplify is not to be simplistic.

Pinker again dodges the issue. Simplification is simplistic when the “general principles” fail to account adequately for the phenomenon in question.

Much of the problem arises because of a simple fact that is too often overlooked: Scientists, for the most part, are human beings with a particular aptitude for pattern-seeking and the manipulation of abstract ideas. They can easily get lost in such pursuits and fail to notice that their abstractions have taken them a long way from reality (e.g., Einstein’s special theory of relativity).

In sum, scientists are human and fallible. It is in the best tradition of science to distrust their scientific claims and to dismiss their non-scientific utterances.

ECONOMICS: PHYSICS ENVY AT WORK

Economics is rife with balderdash cloaked in mathematics. Economists who rely heavily on mathematics like to say (and perhaps even believe) that mathematical expression is more precise than mere words. But, as Arnold Kling points out in “An Important Emerging Economic Paradigm”, mathematical economics is a language of faux precision, which is useful only when applied to well-defined, narrow problems. It can’t address the big issues — such as economic growth — which depend on variables such as the rule of law and social norms, which defy mathematical expression and quantification.

I would go a step further and argue that mathematical economics borders on obscurantism. It’s a cult whose followers speak an arcane language not only to communicate among themselves but to obscure the essentially bankrupt nature of their craft from others. Mathematical expression actually hides the assumptions that underlie it. It’s far easier to identify and challenge the assumptions of “literary” economics than it is to identify and challenge the assumptions of mathematical economics.

I daresay that this is true even for persons who are conversant in mathematics. They may be able to manipulate easily the equations of mathematical economics, but they are able to do so without grasping the deeper meanings — the assumptions and complexities — hidden by those equations. In fact, the ease of manipulating the equations gives them a false sense of mastery of the underlying, real concepts.

Much of the economics profession is nevertheless dedicated to the protection and preservation of the essential incompetence of mathematical economists. This is from “An Important Emerging Economic Paradigm”:

One of the best incumbent-protection rackets going today is for mathematical theorists in economics departments. The top departments will not certify someone as being qualified to have an advanced degree without first subjecting the student to the most rigorous mathematical economic theory. The rationale for this is reminiscent of fraternity hazing. “We went through it, so should they.”

Mathematical hazing persists even though there are signs that the prestige of math is on the decline within the profession. The important Clark Medal, awarded to the most accomplished American economist under the age of 40, has not gone to a mathematical theorist since 1989.

These hazing rituals can have real consequences. In medicine, the controversial tradition of long work hours for medical residents has come under scrutiny over the last few years. In economics, mathematical hazing is not causing immediate harm to medical patients. But it probably is working to the long-term detriment of the profession.

The hazing ritual in economics has at least two real and damaging consequences. First, it discourages entry into the economics profession by persons who, like Kling, can discuss economic behavior without resorting to the sterile language of mathematics. Second, it leads to economics that’s irrelevant to the real world — and dead wrong.

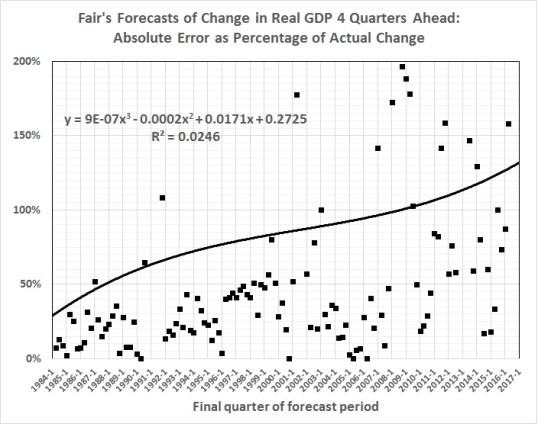

How wrong? Economists are notoriously bad at constructing models that adequately predict near-term changes in GDP. That task should be easier than sorting out the microeconomic complexities of the labor market.

Take Professor Ray Fair, for example. Professor Fair teaches macroeconomic theory, econometrics, and macroeconometric models at Yale University. He has been plying his trade since 1968, first at Princeton, then at M.I.T., and (since 1974) at Yale. Those are big-name schools, so I assume that Prof. Fair is a big name in his field.

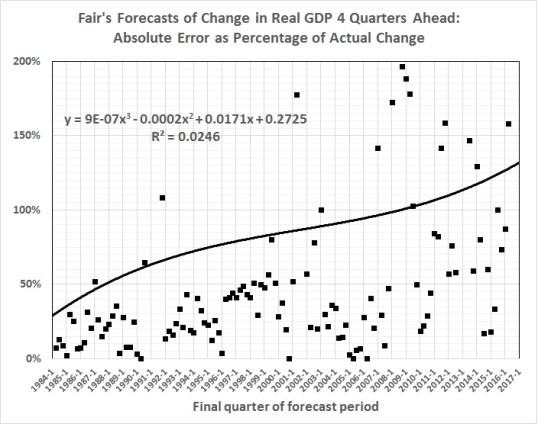

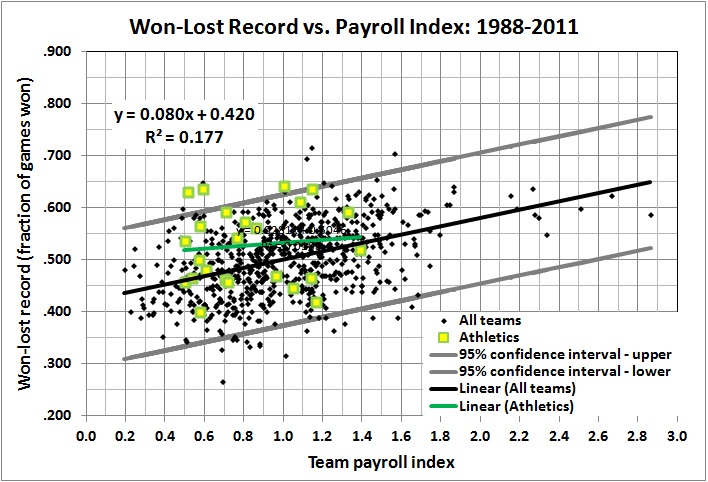

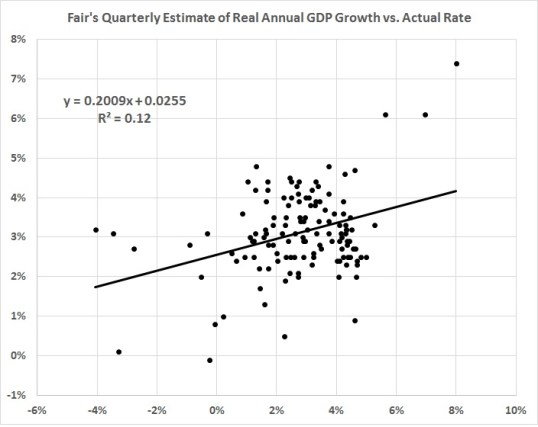

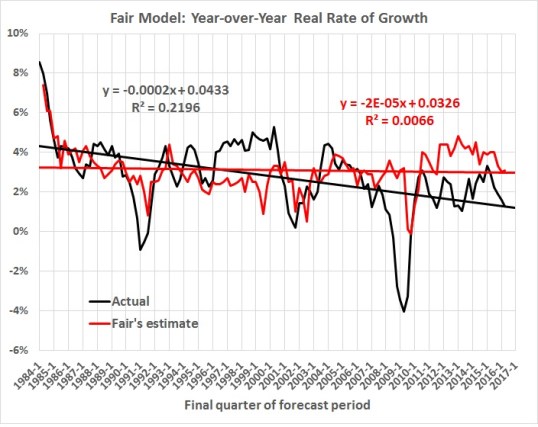

Well, since 1983, Prof. Fair has been forecasting changes in real GDP over the next four quarters. He has made 80 such forecasts based on a model that he has undoubtedly tweaked over the years. The current model is here. His forecasting track record is here. How has he done? Here’s how:

1. The median absolute error of his forecasts is 30 percent.

2. The mean absolute error of his forecasts is 70 percent.

3. His forecasts are rather systematically biased: too high when real, four-quarter GDP growth is less than 4 percent; too low when real, four-quarter GDP growth is greater than 4 percent.

4. His forecasts have grown generally worse — not better — with time.
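The essay does not spell out how the percentage errors are computed. This Python sketch assumes each error is the absolute gap between forecast and actual four-quarter growth, divided by the actual value. The five forecast/actual pairs are invented for illustration and are not taken from Fair's table. They show two features of the list above. First, a single year with growth near zero can inflate a relative error to hundreds of percent and pull the mean well above the median. Second, splitting the signed errors at 4 percent exposes the over/under pattern described in item 3.

```python
from statistics import mean, median

def relative_errors_pct(predicted, actual):
    # Assumption: error = |forecast - actual| / |actual|, expressed in percent.
    return [abs(p - a) / abs(a) * 100 for p, a in zip(predicted, actual)]

def bias_by_regime(predicted, actual, cutoff=4.0):
    """Mean signed error (forecast minus actual) when actual growth is below vs. above the cutoff."""
    below = [p - a for p, a in zip(predicted, actual) if a < cutoff]
    above = [p - a for p, a in zip(predicted, actual) if a > cutoff]
    return (mean(below) if below else None, mean(above) if above else None)

# Hypothetical four-quarter real GDP growth, in percent.
predicted = [3.0, 3.5, 2.5, 4.0, 3.0]
actual = [2.0, 5.0, 0.5, 6.0, 2.5]

errors = relative_errors_pct(predicted, actual)
print(f"median absolute error: {median(errors):.1f}%")
print(f"mean absolute error:   {mean(errors):.1f}%")

below, above = bias_by_regime(predicted, actual)
print(f"average miss when actual growth < 4%: {below:+.2f} points")
print(f"average miss when actual growth > 4%: {above:+.2f} points")
```

A positive average miss below the cutoff (forecasts too high) combined with a negative one above it (forecasts too low) is the signature of a forecast that clings to the middle of the range.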

Prof. Fair is still at it. And his forecasts continue to grow worse with time:

This and later graphs pertaining to Prof. Fair’s forecasts were derived from The Forecasting Record of the U.S. Model, Table 4: Predicted and Actual Values for Four-Quarter Real Growth, at Prof. Fair’s website. The vertical axis of this graph is truncated for ease of viewing; 8 percent of the errors exceed 200 percent.

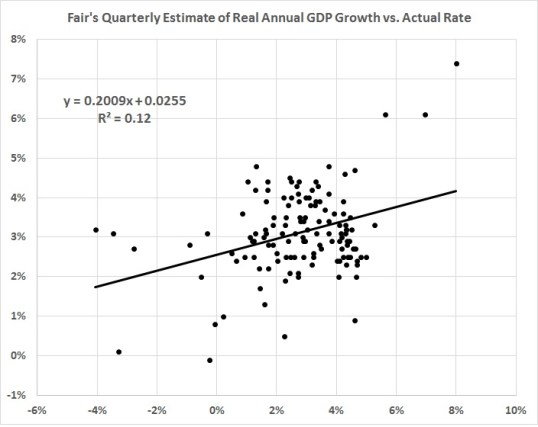

You might think that Fair’s record reflects the persistent use of a model that’s too simple to capture the dynamics of a multi-trillion-dollar economy. But you’d be wrong. The model changes quarterly. This page lists changes only since late 2009; there are links to archives of earlier versions, but those are password-protected.

As for simplicity, the model is anything but simple. For example, go to Appendix A: The U.S. Model: July 29, 2016, and you’ll find a six-sector model comprising 188 equations and hundreds of variables.

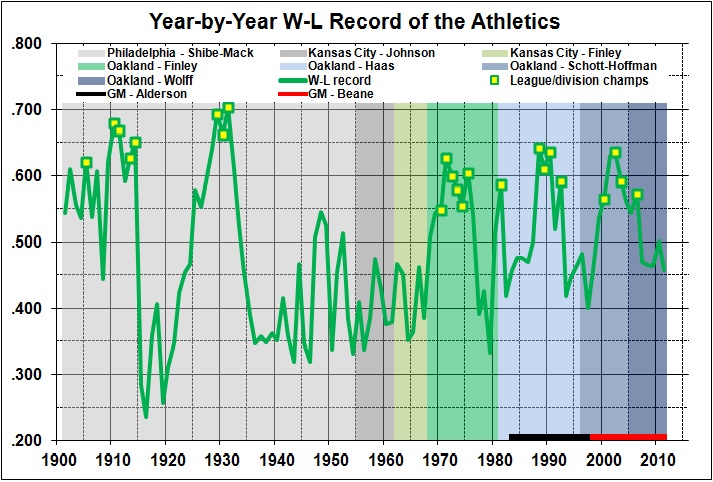

And what does that get you? A weak predictive model:

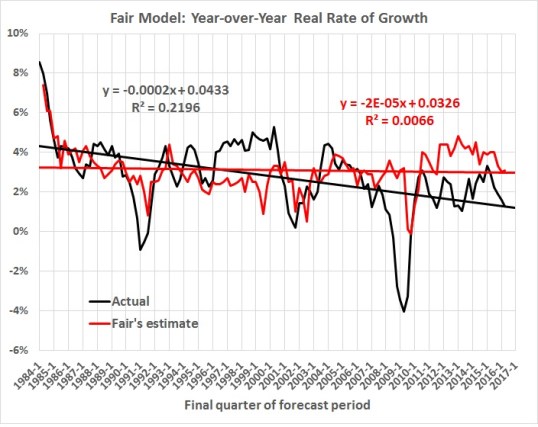

It fails the most important test; that is, it doesn’t reflect the downward trend in economic growth:

THE INVISIBLE ELEPHANT IN THE ROOM

Professor Fair and his prognosticating ilk are pikers compared with John Maynard Keynes and his disciples. The Keynesian multiplier is the fraud of all frauds, not just in economics but in politics, where it is too often invoked as an excuse for taking money from productive uses and pouring it down the rathole of government spending.

The Keynesian (fiscal) multiplier is defined as

the ratio of a change in national income to the change in government spending that causes it. More generally, the exogenous spending multiplier is the ratio of a change in national income to any autonomous change in spending (private investment spending, consumer spending, government spending, or spending by foreigners on the country’s exports) that causes it.

The multiplier is usually invoked by pundits and politicians who are anxious to boost government spending as a “cure” for economic downturns. What’s wrong with that? If government spends an extra $1 to employ previously unemployed resources, why won’t that $1 multiply and become $1.50, $1.60, or even $5 worth of additional output?
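For reference, multiplier figures like $5 come from a simple textbook model like the one below. Its assumptions are standard classroom simplifications, not claims made in this essay: a closed economy, fixed investment $I$, and consumption proportional to income, $C = cY$, where $c$ is the marginal propensity to consume. Substituting into the spending identity gives the familiar $1/(1-c)$ formula. Taking $c = 0.8$, one common textbook choice, yields a multiplier of 5.

```latex
\begin{aligned}
Y &= C + I + G, \qquad C = cY \\
Y &= \frac{I + G}{1 - c} \\
\frac{\Delta Y}{\Delta G} &= \frac{1}{1 - c} = \frac{1}{1 - 0.8} = 5 \quad \text{for } c = 0.8
\end{aligned}
```

The identity alone does not fix the number. The assumed consumption rule $C = cY$ does all the work, and that is the step at issue in what follows.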

What’s wrong is the phony math by which the multiplier is derived, and the phony story that was long ago concocted to explain the operation of the multiplier. Please go to “The Keynesian Multiplier: Fiction vs. Fact” for a detailed explanation of the phony math and a derivation of the true multiplier, which is decidedly negative. Here’s the short version:

- The phony math involves the use of an accounting identity that can be manipulated in many ways, to “prove” many things. But the accounting identity doesn’t express an operational (or empirical) relationship between a change in government spending and a change in GDP.

- The true value of the multiplier isn’t 5 (a common mathematical estimate), 1.5 (a common but mistaken empirical estimate used for government purposes), or any positive number. The true value represents the negative relationship between the change in government spending (including transfer payments) as a fraction of GDP and the change in the rate of real GDP growth. Specifically, where F represents government spending as a fraction of GDP, a rise in F from 0.24 to 0.33 (the actual change from 1947 to 2007) would reduce the real rate of economic growth by 3.1 percentage points (0.031). The real rate of growth from 1947 to 1957 was 4 percent. Other things being the same, the rate of growth would have dropped to 0.9 percent in the period 2008-2017. It actually dropped to 1.4 percent, which is within the standard error of the estimate.

- That kind of drop makes a huge difference in the incomes of Americans. In 10 years, GDP rises by almost 50 percent when the rate of growth is 4 percent, but only by 15 percent when the rate of growth is 1.4 percent. Think of the tens of millions of people who would be living in comfort rather than squalor were it not for Keynesian balderdash, which turns reality on its head in order to promote big government.
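The arithmetic in the last two bullets can be checked with a short Python sketch. It compounds the 4, 1.4, and 0.9 percent annual rates cited above over ten years. It also computes the simple ratio implied by the quoted figures: F rose by 0.09 (from 0.24 to 0.33) while growth fell by 0.031 as a fraction (from 4 percent to 0.9 percent). That ratio is a back-of-the-envelope number derived here, not the author's fitted equation.

```python
def cumulative_growth_pct(annual_rate_pct, years=10):
    """Total percentage increase after compounding an annual growth rate."""
    return ((1 + annual_rate_pct / 100) ** years - 1) * 100

for rate in (4.0, 1.4, 0.9):
    print(f"{rate:.1f}% a year for 10 years: GDP up {cumulative_growth_pct(rate):.1f}%")

# Ratio implied by the quoted figures (not the author's fitted equation):
# F rose from 0.24 to 0.33 while growth fell from 0.040 to 0.009.
delta_f = 0.33 - 0.24
delta_growth = 0.009 - 0.040
implied_ratio = delta_growth / delta_f
print(f"change in growth per unit change in F: {implied_ratio:.3f}")
```

Ten years at 4, 1.4, and 0.9 percent yield total gains of roughly 48, 15, and 9 percent. The implied ratio is about -0.34: each 0.01 rise in F goes with growth roughly a third of a percentage point lower.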

MANAGEMENT “SCIENCE”

A hot new item in management “science” a few years ago was the Candle Problem. Graham Morehead describes the problem and discusses its broader, “scientifically” supported conclusions:

The Candle Problem was first presented by Karl Duncker. Published posthumously in 1945, “On problem solving” describes how Duncker provided subjects with a candle, some matches, and a box of tacks. He told each subject to affix the candle to a cork board wall in such a way that when lit, the candle won’t drip wax on the table below (see figure at right). Can you think of the answer?

The only answer that really works is this: 1. Dump the tacks out of the box, 2. Tack the box to the wall, 3. Light the candle and affix it atop the box as if it were a candle-holder. Incidentally, the problem was much easier to solve if the tacks weren’t in the box at the beginning. When the tacks were in the box the participant saw it only as a tack-box, not something they could use to solve the problem. This phenomenon is called “Functional fixedness.”

Sam Glucksberg added a fascinating twist to this finding in his 1962 paper, “Influence of strength of drive on functional fixedness and perceptual recognition.” (Journal of Experimental Psychology 1962. Vol. 63, No. 1, 36-41). He studied the effect of financial incentives on solving the candle problem. To one group he offered no money. To the other group he offered an amount of money for solving the problem fast.

Remember, there are two candle problems. Let the “Simple Candle Problem” be the one where the tacks are outside the box — no functional fixedness. The solution is straightforward. Here are the results for those who solved it:

Simple Candle Problem Mean Times:

- WITHOUT a financial incentive: 4.99 min

- WITH a financial incentive: 3.67 min

Nothing unexpected here. This is a classical incentivization effect anybody would intuitively expect.

Now, let “In-Box Candle Problem” refer to the original description where the tacks start off in the box.

In-Box Candle Problem Mean Times:

- WITHOUT a financial incentive: 7:41 min

- WITH a financial incentive: 11:08 min

How could this be? The financial incentive made people slower? It gets worse — the slowness increases with the incentive. The higher the monetary reward, the worse the performance! This result has been repeated many times since the original experiment.

Glucksberg and others have shown this result to be highly robust. Daniel Pink calls it a legally provable “fact.” How should we interpret the above results?

When your employees have to do something straightforward, like pressing a button or manning one stage in an assembly line, financial incentives work. It’s a small effect, but they do work. Simple jobs are like the simple candle problem.

However, if your people must do something that requires any creative or critical thinking, financial incentives hurt. The In-Box Candle Problem is the stereotypical problem that requires you to think “Out of the Box,” (you knew that was coming, didn’t you?). Whenever people must think out of the box, offering them a monetary carrot will keep them in that box.

A monetary reward will help your employees focus. That’s the point. When you’re focused you are less able to think laterally. You become dumber. This is not the kind of thing we want if we expect to solve the problems that face us in the 21st century.

All of this is found in a video (to which Morehead links), wherein Daniel Pink (an author and journalist whose actual knowledge of science and business appears to be close to zero) expounds the lessons of the Candle Problem. Pink displays his (no-doubt-profitable) conviction that the Candle Problem and related “science” reveal (a) the utter bankruptcy of capitalism and (b) the need to replace managers with touchy-feely gurus (like himself, I suppose). That Pink has worked for two of the country’s leading anti-capitalist airheads — Al Gore and Robert Reich — should tell you all that you need to know about Pink’s real agenda.

Here are my reasons for sneering at Pink and his ilk:

1. I have been there and done that. That is to say, as a manager, I lived through (and briefly bought into) the touchy-feely fads of the ’80s and ’90s. Think In Search of Excellence, The One Minute Manager, The Seven Habits of Highly Effective People, and so on. What did anyone really learn from those books and the lectures and workshops based on them? A perceptive person would have learned that it is easy to make up plausible stories about the elements of success, and having done so, it is possible to make a lot of money peddling those stories. But the stories are flawed because (a) they are based on exceptional cases; (b) they attribute success to qualitative assessments of behaviors that seem to be present in those exceptional cases; and (c) they do not properly account for the surrounding (and critical) circumstances that really led to success, among which are luck and rare combinations of personal qualities (e.g., high intelligence, perseverance, people-reading skills). In short, Pink and his predecessors are guilty of reductionism and the post hoc ergo propter hoc fallacy.

2. Also at work is an undue generalization about the implications of the Candle Problem. It may be true that workers will perform better — at certain kinds of tasks (very loosely specified) — if they are not distracted by incentives that are related to the performance of those specific tasks. But what does that have to do with incentives in general? Not much, because the Candle Problem is unlike any work situation that I can think of. Tasks requiring creativity are not performed under deadlines of a few minutes; tasks requiring creativity are (usually) assigned to persons who have demonstrated a creative flair, not to randomly picked subjects; most work, even in this day, involves the routine application of protocols and tools that were designed to produce a uniform result of acceptable quality; it is the design of protocols and tools that requires creativity, and that kind of work is not done under the kind of artificial constraints found in the Candle Problem.

3. The Candle Problem, with its anti-incentive “lesson”, is therefore inapplicable to the real world, where incentives play a crucial and positive role:

- The profit incentive leads firms to invest resources in the development and/or production of things that consumers are willing to buy because those things satisfy wants at the right price.

- Firms acquire resources to develop and produce things by bidding for those resources, that is, by offering monetary incentives to attract the resources required to make the things that consumers are willing to buy.

- The incentives (compensation) offered to workers of various kinds (from scientists with doctorates to burger-flippers) are generally commensurate with the contributions made by those workers to the production of things of value to consumers, and to the value placed on those things by consumers.

- Workers agree to the terms and conditions of employment (including compensation) before taking a job. The incentive for most workers is to keep a job by performing adequately over a sustained period — not by demonstrating creativity in a few minutes. Some workers (but not a large fraction of them) are striving for performance-based commissions, bonuses, and profit-sharing distributions. But those distributions are based on performance over a sustained period, during which the striving workers have plenty of time to think about how they can perform better.

- Truly creative work is done, for the most part, by persons who are hired for such work on the basis of their credentials (education, prior employment, test results). Their compensation is based on their credentials, initially, and then on their performance over a sustained period. If they are creative, they have plenty of psychological space in which to exercise and demonstrate their creativity.

- On-the-job creativity — the improvement of protocols and tools by workers using them — does not occur under conditions of the kind assumed in the Candle Problem. Rather, on-the-job creativity flows from actual work and insights about how to do the work better. It happens when it happens, and has nothing to do with artificial time constraints and monetary incentives to be “creative” within those constraints.

- Pink’s essential pitch is that incentives can be replaced by offering jobs that yield autonomy (self-direction), mastery (the satisfaction of doing difficult things well), and purpose (the satisfaction of contributing to the accomplishment of something important). Well, good luck with that, but I (and millions of other consumers) want what we want, and if workers want to make a living they will just have to provide what we want, not what turns them on. Yes, there is a lot to be said for autonomy, mastery, and purpose, but there is also a lot to be said for getting a paycheck. And, contrary to Pink’s implication, getting a paycheck does not rule out autonomy, mastery, and purpose — where those happen to go with the job.

Pink and company’s “insights” about incentives and creativity are 180 degrees off-target. McDonald’s could use the Candle Problem to select creative burger-flippers who will perform well under tight deadlines because their compensation is unrelated to the creativity of their burger-flipping. McDonald’s customers should be glad that McDonald’s has taken creativity out of the picture by reducing burger-flipping to the routine application of protocols and tools.

In summary:

- The Candle Problem is an interesting experiment, and probably valid with respect to the performance of specific tasks against tight deadlines. I think the results apply whether the stakes are money or any other kind of prize. The experiment illustrates the “choke” factor, and nothing more profound than that.

- I question whether the experiment applies to the usual kind of incentive (e.g., a commission or bonus), where the “incentee” has ample time (months, years) for reflection and research that will enable him to improve his performance and attain a bigger commission or bonus (which usually isn’t an all-or-nothing arrangement).

- There’s also the dissimilarity between the Candle Problem, which involves more-or-less randomly chosen subjects working against an artificial deadline, and actual creative thinking, which usually involves persons who are experts (even if the expertise is as mundane as ditch-digging) working against looser deadlines or none at all.

PARTISAN POLITICS IN THE GUISE OF PSEUDO-SCIENCE

There’s plenty of it to go around, but this one is a whopper. Peter Singer outdoes his usual tendentious self in this review of Steven Pinker’s The Better Angels of Our Nature: Why Violence Has Declined. In the course of the review, Singer writes:

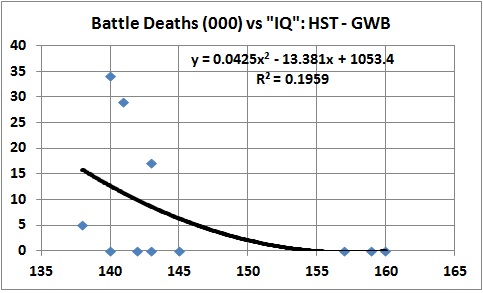

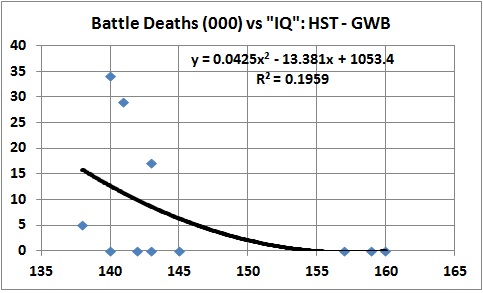

Pinker argues that enhanced powers of reasoning give us the ability to detach ourselves from our immediate experience and from our personal or parochial perspective, and frame our ideas in more abstract, universal terms. This in turn leads to better moral commitments, including avoiding violence. It is just this kind of reasoning ability that has improved during the 20th century. He therefore suggests that the 20th century has seen a “moral Flynn effect, in which an accelerating escalator of reason carried us away from impulses that lead to violence” and that this lies behind the long peace, the new peace, and the rights revolution. Among the wide range of evidence he produces in support of that argument is the tidbit that since 1946, there has been a negative correlation between an American president’s I.Q. and the number of battle deaths in wars involving the United States.

Singer does not give the source of the IQ estimates on which Pinker relies, but the supposed correlation points to a discredited piece of historiometry by Dean Keith Simonton. Simonton jumps through various hoops to assess the IQs of every president from Washington to Bush II, to one decimal place. That is a feat on a par with reconstructing the final thoughts of Abel, ere Cain slew him.

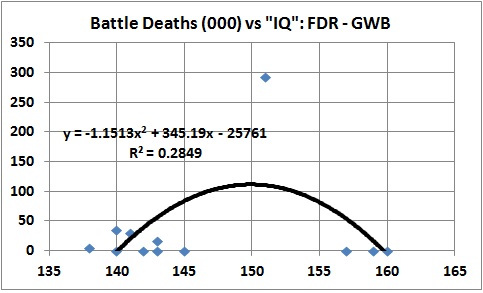

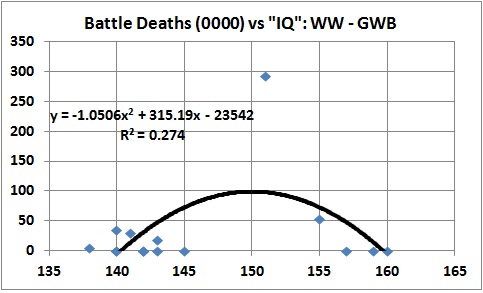

Before I explain the discrediting of Simonton’s obviously discreditable “research”, there is some fun to be had with the Pinker-Singer story that links presidential IQ (Simonton-style) to battle deaths. First, of course, there is the convenient cutoff point of 1946. Why 1946? Well, it enables Pinker-Singer to avoid the inconvenient fact that the Civil War, World War I, and World War II happened while the presidency was held by three men who (in Simonton’s estimation) had high IQs: Lincoln, Wilson, and FDR.

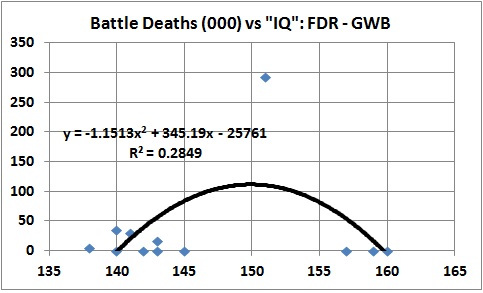

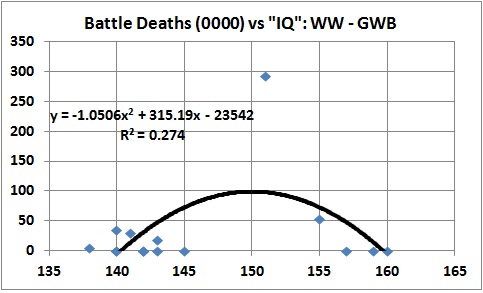

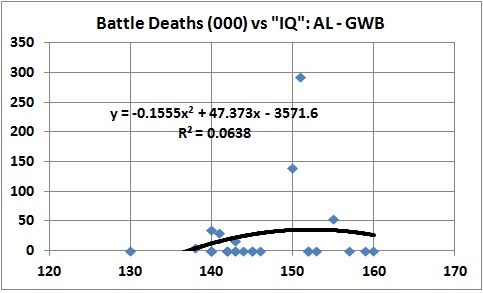

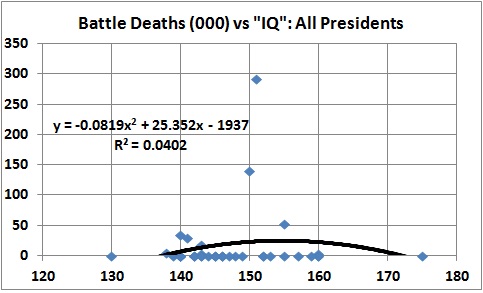

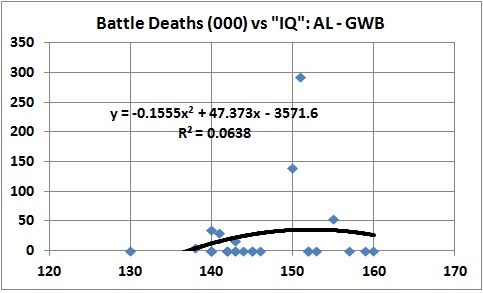

The next several graphs depict best-fit relationships between Simonton’s estimates of presidential IQ and the U.S. battle deaths that occurred during each president’s term of office.* The presidents, in order of their appearance in the titles of the graphs, are Harry S Truman (HST), George W. Bush (GWB), Franklin Delano Roosevelt (FDR), (Thomas) Woodrow Wilson (WW), Abraham Lincoln (AL), and George Washington (GW). The number of battle deaths is rounded to the nearest thousand, so that the prevailing value is 0, even in the case of the Spanish-American War (385 U.S. combat deaths) and George H.W. Bush’s Gulf War (147 U.S. combat deaths).

This is probably the relationship referred to by Singer, though Pinker may show a linear fit, rather than the tighter polynomial fit used here:

It looks bad for the low “IQ” presidents — if you believe Simonton’s estimates of IQ, which you shouldn’t, and if you believe that battle deaths are a bad thing per se, which they aren’t. I will come back to those points. For now, just suspend your well-justified disbelief.

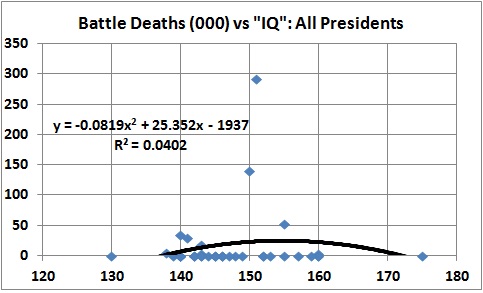

If the relationship for the HST-GWB era were statistically meaningful, it would not change much with the introduction of additional statistics about “IQ” and battle deaths, but it does:

If you buy the brand of snake oil being peddled by Pinker-Singer, you must believe that the “dumbest” and “smartest” presidents are unlikely to get the U.S. into wars that result in a lot of battle deaths, whereas some (but, mysteriously, not all) of the “medium-smart” presidents (Lincoln, Wilson, FDR) are likely to do so.

In any event, if you believe in Pinker-Singer’s snake oil, you must accept the consistent “humpback” relationship that is depicted in the preceding four graphs, rather than the highly selective, one-shot negative relationship of the HST-GWB graph.

More seriously, the relationship in the HST-GWB graph is an evident ploy to discredit certain presidents (especially GWB, I suspect), which is why it covers only the period since WWII. Why not just say that you think GWB is a chimp-like, war-mongering moron and be done with it? Pseudo-statistics of the kind offered up by Pinker-Singer are nothing more than a talking point for those already convinced that Bush=Hitler.

But as long as this silly game is in progress, let us continue it, with a new rule. Let us advance from one to two explanatory variables. The second explanatory variable that strongly suggests itself is political party. And because it is not good practice to omit relevant statistics (a favorite gambit of liars), I estimated an equation relating battle deaths to “IQ” and party for the 27 men who served as president from the first Republican presidency (Lincoln’s) through the presidency of GWB. The equation looks like this:

U.S. battle deaths (thousands) “owned” by a president = −80.6 + 0.841 × “IQ” − 31.3 × party (where party = 0 for a Democrat, 1 for a Republican)

In other words, holding party constant, battle deaths rise at the rate of 841 per IQ point (so much for Pinker-Singer). But, at any given “IQ,” there will be about 31,300 fewer deaths with a Republican in the White House (so much for Pinker-Singer’s implied swipe at GWB).
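For anyone who wants to check the arithmetic, here is a minimal Python sketch that does nothing more than evaluate the fitted equation above with its three coefficients. The helper name `predicted_battle_deaths` and the “IQ” value of 150 are hypothetical, chosen only for illustration; they are not Simonton’s estimate for any particular president, and the sketch does not re-estimate the equation from data.

```python
# Coefficients of the fitted equation in the text (battle deaths in thousands).
INTERCEPT = -80.6
IQ_COEF = 0.841     # thousands of deaths per "IQ" point
PARTY_COEF = -31.3  # thousands of deaths; party = 1 for GOP, 0 for Dem


def predicted_battle_deaths(iq: float, party: int) -> float:
    """Return predicted U.S. battle deaths (thousands) for a president.

    Hypothetical helper: it only evaluates the linear equation.
    """
    if party not in (0, 1):
        raise ValueError("party must be 0 (Dem) or 1 (GOP)")
    return INTERCEPT + IQ_COEF * iq + PARTY_COEF * party


# Hypothetical inputs, for illustration only.
dem = predicted_battle_deaths(150.0, 0)
gop = predicted_battle_deaths(150.0, 1)
one_more_point = predicted_battle_deaths(151.0, 0)

print(f"Dem, IQ 150: {dem:.3f} thousand")
print(f"GOP, IQ 150: {gop:.3f} thousand")
# Differences converted from thousands to individual deaths.
print(f"Effect of one IQ point: {1000 * (one_more_point - dem):.0f} deaths")
print(f"Effect of party (GOP minus Dem): {1000 * (gop - dem):.0f} deaths")
```

The last two printed lines reproduce the readings given above: 841 more deaths per “IQ” point, and about 31,300 fewer deaths (printed as a negative number) with a Republican in the White House.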

All of this is nonsense, of course, for two reasons: Simonton’s estimates of IQ are hogwash, and the number of U.S. battle deaths is a meaningless number, taken by itself.

With regard to the hogwash, Simonton’s estimates of presidents’ IQs put every one of them — including the “dumbest,” U.S. Grant — in the top 2.3 percent of the population. And the mean of Simonton’s estimates puts the average president in the top 0.1 percent (one-tenth of one percent) of the population. That is literally incredible. Good evidence of the unreliability of Simonton’s estimates is found in an entry by Thomas C. Reeves at George Mason University’s History News Network. Reeves is the author of A Question of Character: A Life of John F. Kennedy, the negative reviews of which are evidently the work of JFK idolaters who refuse to be disillusioned by facts. Anyway, here is Reeves:

I’m a biographer of two of the top nine presidents on Simonton’s list and am highly familiar with the histories of the other seven. In my judgment, this study has little if any value. Let’s take JFK and Chester A. Arthur as examples.

Kennedy was actually given an IQ test before entering Choate. His score was 119…. There is no evidence to support the claim that his score should have been more than 40 points higher [i.e., the IQ of 160 attributed to Kennedy by Simonton]. As I described in detail in A Question Of Character [link added], Kennedy’s academic achievements were modest and respectable, his published writing and speeches were largely done by others (no study of Kennedy is worthwhile that downplays the role of Ted Sorensen)….

Chester Alan Arthur was largely unknown before my Gentleman Boss was published in 1975. The discovery of many valuable primary sources gave us a clear look at the president for the first time. Among the most interesting facts that emerged involved his service during the Civil War, his direct involvement in the spoils system, and the bizarre way in which he was elevated to the GOP presidential ticket in 1880. His concealed and fatal illness while in the White House also came to light.

While Arthur was a college graduate, and was widely considered to be a gentleman, there is no evidence whatsoever to suggest that his IQ was extraordinary. That a psychologist can rank his intelligence 2.3 points ahead of Lincoln’s suggests access to a treasure of primary sources from and about Arthur that does not exist.

This historian thinks it impossible to assign IQ numbers to historical figures. If there is sufficient evidence (as there usually is in the case of American presidents), we can call people from the past extremely intelligent. Adams, Wilson, TR, Jefferson, and Lincoln were clearly well above average intellectually. But let us not pretend that we can rank them by tenths of a percentage point or declare that a man in one era stands well above another from a different time and place.

My educated guess is that this recent study was designed in part to denigrate the intelligence of the current occupant of the White House….

That is an excellent guess.
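The 2.3 percent and 0.1 percent figures cited above are ordinary normal-curve arithmetic. The minimal Python sketch below assumes the conventional IQ scale (mean 100, standard deviation 15), which may not be the scale Simonton used; the function names are hypothetical. On that scale, a score of 130 marks the top 2.3 percent, and the top 0.1 percent begins at a score of roughly 146.

```python
from statistics import NormalDist

# Assumption: IQ scores are normally distributed with mean 100 and
# standard deviation 15 (the common modern convention; Simonton's own
# scaling may differ).
IQ_SCALE = NormalDist(mu=100, sigma=15)


def share_at_or_above(iq: float) -> float:
    """Fraction of the population with an IQ at or above `iq`."""
    return 1.0 - IQ_SCALE.cdf(iq)


def iq_for_top_share(share: float) -> float:
    """IQ threshold that admits only the top `share` of the population."""
    return IQ_SCALE.inv_cdf(1.0 - share)


print(f"IQ 130 is in the top {100 * share_at_or_above(130):.1f}%")
print(f"Top 0.1% starts at about IQ {iq_for_top_share(0.001):.0f}")
```

`statistics.NormalDist` is part of the Python standard library from version 3.8 onward.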

The meaninglessness of battle deaths as a measure of anything — but battle deaths — should be evident. But in case it is not evident, here goes:

- Wars are sometimes necessary, sometimes not. (I give my views about the wisdom of America’s various wars at this post.) Necessary or not, presidents usually act in accordance with popular and elite opinion about the desirability of a particular war. Imagine, for example, the reaction if FDR had not gone to Congress on December 8, 1941, to ask for a declaration of war against Japan, or if GWB had not sought the approval of Congress for action in Afghanistan.

- Presidents may have a lot to do with the decision to enter a war, but they have little to do with the external forces that help to shape that decision. GHWB, for example, had nothing to do with Saddam’s decision to invade Kuwait and thereby threaten vital U.S. interests in the Middle East. GWB, to take another example, was not a party to the choices of earlier presidents (GHWB and Clinton) that enabled Saddam to stay in power and encouraged Osama bin Laden to believe that America could be brought to its knees by a catastrophic attack.

- The number of battle deaths in a war depends on many things outside the control of a particular president; for example, the size and capabilities of enemy forces, the size and capabilities of U.S. forces (which have a lot to do with the decisions of earlier administrations and Congresses), and the scope and scale of a war (again, largely dependent on the enemy).

- Battle deaths represent personal tragedies, but — in and of themselves — are not a measure of a president’s wisdom or acumen. Whether the deaths were in vain is a separate issue that depends on the aforementioned considerations. To use battle deaths as a single, negative measure of a president’s ability is rank cynicism — the rankness of which is revealed in Pinker’s decision to ignore Lincoln and FDR and their “good” but deadly wars.

To put the last point another way, if the number of battle deaths is a bad thing, Lincoln and FDR should be rotting in hell for the wars that brought an end to slavery and Hitler.

__________

* The numbers of U.S. battle deaths, by war, are available at infoplease.com, “America’s Wars: U.S. Casualties and Veterans”. The deaths are “assigned” to presidents as follows (numbers in parentheses indicate thousands of deaths):

All of the deaths (2) in the War of 1812 occurred on Madison’s watch.

All of the deaths (2) in the Mexican-American War occurred on Polk’s watch.

I count only Union battle deaths (140) during the Civil War; all are “Lincoln’s.” Let the Confederate dead be on the head of Jefferson Davis. This is a gift, of sorts, to Pinker-Singer because if Confederate dead were counted as Lincoln’s, with his high “IQ,” it would make Pinker-Singer’s hypothesis even more ludicrous than it is.

WW is the sole “owner” of WWI battle deaths (53).

Some of the U.S. battle deaths in WWII (292) occurred while HST was president, but Truman was merely presiding over the final months of a war that was almost won when FDR died. Truman’s main role was to hasten the end of the war in the Pacific by electing to drop the A-bombs on Hiroshima and Nagasaki. So FDR gets “credit” for all WWII battle deaths.

The Korean War did not end until after Eisenhower succeeded Truman, but it was “Truman’s war,” so he gets “credit” for all Korean War battle deaths (34). This is another “gift” to Pinker-Singer because Ike’s “IQ” is higher than Truman’s.

Vietnam was “LBJ’s war,” but I’m sure that Singer would not want Nixon to go without “credit” for the battle deaths that occurred during his administration. Moreover, LBJ had effectively lost the Vietnam War through his gradualism, but Nixon chose nevertheless to prolong the agony. So I have shared the “credit” for Vietnam War battle deaths between LBJ (deaths in 1965-68: 29) and RMN (deaths in 1969-73: 17). To do that, I apportioned total Vietnam War battle deaths, as given by infoplease.com, according to the total number of U.S. deaths in each year of the war, 1965-1973.

The wars in Afghanistan and Iraq are “GWB’s wars,” even though Obama has continued them. So I have “credited” GWB with all the battle deaths in those wars, as of May 27, 2011 (5).

The relative paucity of U.S. combat deaths in other post-WWII actions (e.g., Lebanon, Somalia, Persian Gulf) is attested to by “Post-Vietnam Combat Casualties”, at infoplease.com.
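The Vietnam apportionment described above is simple proportional allocation: each president receives the share of total battle deaths that matches his years’ share of total U.S. deaths. Here is a minimal Python sketch of that procedure. The function name `apportion` is hypothetical, and the yearly counts in the example are placeholder numbers, not the infoplease.com figures.

```python
def apportion(total: float, yearly_deaths: dict[int, float],
              groups: dict[str, range]) -> dict[str, float]:
    """Split `total` among groups of years in proportion to yearly deaths.

    Hypothetical helper: `yearly_deaths` maps year -> total deaths that year,
    and `groups` maps a label (e.g., a president) -> the years assigned to it.
    """
    all_years = sum(yearly_deaths.values())
    if all_years <= 0:
        raise ValueError("yearly deaths must sum to a positive number")
    return {
        name: total * sum(yearly_deaths[y] for y in years) / all_years
        for name, years in groups.items()
    }


# Placeholder data, NOT real casualty figures.
shares = apportion(
    50,
    {1: 10, 2: 30, 3: 60},
    {"early years": range(1, 3), "late years": range(3, 4)},
)
for name, value in shares.items():
    print(f"{name}: {value:.1f}")
```

With these placeholder numbers, the early years account for 40 of 100 yearly deaths and so receive 40 percent of the total (20), while the late years receive the remaining 30.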

A THIRD APPEARANCE BY PINKER

Steven Pinker, whose ignominious outpourings I have addressed twice here, deserves a third strike (which he shall duly be awarded). Pinker’s The Better Angels of Our Nature is cited gleefully by leftists and cockeyed optimists as evidence that human beings, on the whole, are becoming kinder and gentler because of:

- The Leviathan – the rise of the modern nation-state and judiciary “with a monopoly on the legitimate use of force,” which “can defuse the [individual] temptation of exploitative attack, inhibit the impulse for revenge, and circumvent…self-serving biases”;

- Commerce – the rise of “technological progress [allowing] the exchange of goods and services over longer distances and larger groups of trading partners,” so that “other people become more valuable alive than dead” and “are less likely to become targets of demonization and dehumanization”;

- Feminization – increasing respect for “the interests and values of women”;

- Cosmopolitanism – the rise of forces such as literacy, mobility, and mass media, which “can prompt people to take the perspectives of people unlike themselves and to expand their circle of sympathy to embrace them”;

- The Escalator of Reason – an “intensifying application of knowledge and rationality to human affairs,” which “can force people to recognize the futility of cycles of violence, to ramp down the privileging of their own interests over others’, and to reframe violence as a problem to be solved rather than a contest to be won.”

I can tell you that Pinker’s book is hogwash because two very bright leftists — Peter Singer and Will Wilkinson — have strongly and wrongly endorsed some of its key findings. I dispatched Singer earlier. As for Wilkinson, he praises statistics adduced by Pinker that show a decline in the use of capital punishment:

In the face of such a decisive trend in moral culture, we can say a couple different things. We can say that this is just change and says nothing in particular about what is really right or wrong, good or bad. Or we can take take say this is evidence of moral progress, that we have actually become better. I prefer the latter interpretation for basically the same reasons most of us see the abolition of slavery and the trend toward greater equality between races and sexes as progress and not mere morally indifferent change. We can talk about the nature of moral progress later. It’s tricky. For now, I want you to entertain the possibility that convergence toward the idea that execution is wrong counts as evidence that it is wrong.

I would count convergence toward the idea that execution is wrong as evidence that it is wrong, if that idea were (a) increasingly held by individuals who (b) had arrived at their “enlightenment” uninfluenced by operatives of the state (legislatures and judges), who take it upon themselves to flout popular support of the death penalty. What we have, in the case of the death penalty, is moral regress, not moral progress.

Moral regress because the abandonment of the death penalty puts innocent lives at risk. Capital punishment sends a message, and the message is effective when it is delivered: it deters homicide. And even if it didn’t, it would at least remove killers from our midst, permanently. By what standard of morality can one claim that it is better to spare killers than to protect innocents? For that matter, by what standard of morality is it better to kill innocents in the womb than to spare killers? Proponents of abortion (like Singer and Wilkinson) — who by and large oppose capital punishment — are completely lacking in moral authority.

Returning to Pinker’s thesis that violence has declined, I quote a review at Foseti:

Pinker’s basic problem is that he essentially defines “violence” in such a way that his thesis that violence is declining becomes self-fulling. “Violence” to Pinker is fundamentally synonymous with behaviors of older civilizations. On the other hand, modern practices are defined to be less violent than newer practices.

A while back, I linked to a story about a guy in my neighborhood who’s been arrested over 60 times for breaking into cars. A couple hundred years ago, this guy would have been killed for this sort of vandalism after he got caught the first time. Now, we feed him and shelter him for a while and then we let him back out to do this again. Pinker defines the new practice as a decline in violence – we don’t kill the guy anymore! Someone from a couple hundred years ago would be appalled that we let the guy continue destroying other peoples’ property without consequence. In the mind of those long dead, “violence” has in fact increased. Instead of a decline in violence, this practice seems to me like a decline in justice – nothing more or less.

Here’s another example, Pinker uses creative definitions to show that the conflicts of the 20th Century pale in comparison to previous conflicts. For example, all the Mongol Conquests are considered one event, even though they cover 125 years. If you lump all these various conquests together and you split up WWI, WWII, Mao’s takeover in China, the Bolshevik takeover of Russia, the Russian Civil War, and the Chinese Civil War (yes, he actually considers this a separate event from Mao), you unsurprisingly discover that the events of the 20th Century weren’t all that violent compared to events in the past! Pinker’s third most violent event is the “Mideast Slave Trade” which he says took place between the 7th and 19th Centuries. Seriously. By this standard, all the conflicts of the 20th Century are related. Is the Russian Revolution or the rise of Mao possible without WWII? Is WWII possible without WWI? By this consistent standard, the 20th Century wars of Communism would have seen the worst conflict by far. Of course, if you fiddle with the numbers, you can make any point you like.

There’s much more to the review, including some telling criticisms of Pinker’s five reasons for the (purported) decline in violence. That the reviewer somehow still wants to believe in the rightness of Pinker’s thesis says more about the reviewer’s optimism than it does about the validity of Pinker’s thesis.

That thesis is fundamentally flawed, as Robert Epstein points out in a review at Scientific American:

[T]he wealth of data [Pinker] presents cannot be ignored—unless, that is, you take the same liberties as he sometimes does in his book. In two lengthy chapters, Pinker describes psychological processes that make us either violent or peaceful, respectively. Our dark side is driven by a evolution-based propensity toward predation and dominance. On the angelic side, we have, or at least can learn, some degree of self-control, which allows us to inhibit dark tendencies.

There is, however, another psychological process—confirmation bias—that Pinker sometimes succumbs to in his book. People pay more attention to facts that match their beliefs than those that undermine them. Pinker wants peace, and he also believes in his hypothesis; it is no surprise that he focuses more on facts that support his views than on those that do not. The SIPRI arms data are problematic, and a reader can also cherry-pick facts from Pinker’s own book that are inconsistent with his position. He notes, for example, that during the 20th century homicide rates failed to decline in both the U.S. and England. He also describes in graphic and disturbing detail the savage way in which chimpanzees—our closest genetic relatives in the animal world—torture and kill their own kind.

Of greater concern is the assumption on which Pinker’s entire case rests: that we look at relative numbers instead of absolute numbers in assessing human violence. But why should we be content with only a relative decrease? By this logic, when we reach a world population of nine billion in 2050, Pinker will conceivably be satisfied if a mere two million people are killed in war that year.

The biggest problem with the book, though, is its overreliance on history, which, like the light on a caboose, shows us only where we are not going. We live in a time when all the rules are being rewritten blindingly fast—when, for example, an increasingly smaller number of people can do increasingly greater damage. Yes, when you move from the Stone Age to modern times, some violence is left behind, but what happens when you put weapons of mass destruction into the hands of modern people who in many ways are still living primitively? What happens when the unprecedented occurs—when a country such as Iran, where women are still waiting for even the slightest glimpse of those better angels, obtains nuclear weapons? Pinker doesn’t say.

Pinker’s belief that violence is on the decline reminds me of “it’s different this time”, a phrase that was on the lips of hopeful stock-pushers, stock-buyers, and pundits during the stock-market bubble of the late 1990s. That bubble ended, of course, in the spectacular crash of 2000.

Predictions about the future of humankind are better left in the hands of writers who see human nature whole, and who are not out to prove that it can be shaped or contained by the kinds of “liberal” institutions that Pinker so obviously favors.

Consider this, from an article by Robert J. Samuelson at The Washington Post:

[T]he Internet’s benefits are relatively modest compared with previous transformative technologies, and it brings with it a terrifying danger: cyberwar. Amid the controversy over leaks from the National Security Agency, this looms as an even bigger downside.

By cyberwarfare, I mean the capacity of groups — whether nations or not — to attack, disrupt and possibly destroy the institutions and networks that underpin everyday life. These would be power grids, pipelines, communication and financial systems, business record-keeping and supply-chain operations, railroads and airlines, databases of all types (from hospitals to government agencies). The list runs on. So much depends on the Internet that its vulnerability to sabotage invites doomsday visions of the breakdown of order and trust.

In a report, the Defense Science Board, an advisory group to the Pentagon, acknowledged “staggering losses” of information involving weapons design and combat methods to hackers (not identified, but probably Chinese). In the future, hackers might disarm military units. “U.S. guns, missiles and bombs may not fire, or may be directed against our own troops,” the report said. It also painted a specter of social chaos from a full-scale cyberassault. There would be “no electricity, money, communications, TV, radio or fuel (electrically pumped). In a short time, food and medicine distribution systems would be ineffective.”

But Pinker wouldn’t count the resulting chaos as violence, as long as human beings were merely starving and dying of various diseases. That violence would ensue, of course, is another story, which is told by John Gray in The Silence of Animals: On Progress and Other Modern Myths. Gray’s book — published 18 months after Better Angels — could be read as a refutation of Pinker’s book, though Gray doesn’t mention Pinker or his book.

The gist of Gray’s argument is faithfully recounted in a review of Gray’s book by Robert W. Merry at The National Interest:

The noted British historian J. B. Bury (1861–1927) … wrote, “This doctrine of the possibility of indefinitely moulding the characters of men by laws and institutions . . . laid a foundation on which the theory of the perfectibility of humanity could be raised. It marked, therefore, an important stage in the development of the doctrine of Progress.”

We must pause here over this doctrine of progress. It may be the most powerful idea ever conceived in Western thought—emphasizing Western thought because the idea has had little resonance in other cultures or civilizations. It is the thesis that mankind has advanced slowly but inexorably over the centuries from a state of cultural backwardness, blindness and folly to ever more elevated stages of enlightenment and civilization—and that this human progression will continue indefinitely into the future…. The U.S. historian Charles A. Beard once wrote that the emergence of the progress idea constituted “a discovery as important as the human mind has ever made, with implications for mankind that almost transcend imagination.” And Bury, who wrote a book on the subject, called it “the great transforming conception, which enables history to define her scope.”

Gray rejects it utterly. In doing so, he rejects all of modern liberal humanism. “The evidence of science and history,” he writes, “is that humans are only ever partly and intermittently rational, but for modern humanists the solution is simple: human beings must in future be more reasonable. These enthusiasts for reason have not noticed that the idea that humans may one day be more rational requires a greater leap of faith than anything in religion.” In an earlier work, Straw Dogs: Thoughts on Humans and Other Animals, he was more blunt: “Outside of science, progress is simply a myth.”

… Gray has produced more than twenty books demonstrating an expansive intellectual range, a penchant for controversy, acuity of analysis and a certain political clairvoyance.

He rejected, for example, Francis Fukuyama’s heralded “End of History” thesis—that Western liberal democracy represents the final form of human governance—when it appeared in this magazine in 1989. History, it turned out, lingered long enough to prove Gray right and Fukuyama wrong….

Though for decades his reputation was confined largely to intellectual circles, Gray’s public profile rose significantly with the 2002 publication of Straw Dogs, which sold impressively and brought him much wider acclaim than he had known before. The book was a concerted and extensive assault on the idea of progress and its philosophical offspring, secular humanism. The Silence of Animals is in many ways a sequel, plowing much the same philosophical ground but expanding the cultivation into contiguous territory mostly related to how mankind—and individual humans—might successfully grapple with the loss of both metaphysical religion of yesteryear and today’s secular humanism. The fundamentals of Gray’s critique of progress are firmly established in both books and can be enumerated in summary.

First, the idea of progress is merely a secular religion, and not a particularly meaningful one at that. “Today,” writes Gray in Straw Dogs, “liberal humanism has the pervasive power that was once possessed by revealed religion. Humanists like to think they have a rational view of the world; but their core belief in progress is a superstition, further from the truth about the human animal than any of the world’s religions.”

Second, the underlying problem with this humanist impulse is that it is based upon an entirely false view of human nature—which, contrary to the humanist insistence that it is malleable, is immutable and impervious to environmental forces. Indeed, it is the only constant in politics and history. Of course, progress in scientific inquiry and in resulting human comfort is a fact of life, worth recognition and applause. But it does not change the nature of man, any more than it changes the nature of dogs or birds. “Technical progress,” writes Gray, again in Straw Dogs, “leaves only one problem unsolved: the frailty of human nature. Unfortunately that problem is insoluble.”

That’s because, third, the underlying nature of humans is bred into the species, just as the traits of all other animals are. The most basic trait is the instinct for survival, which is placed on hold when humans are able to live under a veneer of civilization. But it is never far from the surface. In The Silence of Animals, Gray discusses the writings of Curzio Malaparte, a man of letters and action who found himself in Naples in 1944, shortly after the liberation. There he witnessed a struggle for life that was gruesome and searing. “It is a humiliating, horrible thing, a shameful necessity, a fight for life,” wrote Malaparte. “Only for life. Only to save one’s skin.” Gray elaborates:

Observing the struggle for life in the city, Malaparte watched as civilization gave way. The people the inhabitants had imagined themselves to be—shaped, however imperfectly, by ideas of right and wrong—disappeared. What were left were hungry animals, ready to do anything to go on living; but not animals of the kind that innocently kill and die in forests and jungles. Lacking a self-image of the sort humans cherish, other animals are content to be what they are. For human beings the struggle for survival is a struggle against themselves.

When civilization is stripped away, the raw animal emerges. “Darwin showed that humans are like other animals,” writes Gray in Straw Dogs, expressing in this instance only a partial truth. Humans are different in a crucial respect, captured by Gray himself when he notes that Homo sapiens inevitably struggle with themselves when forced to fight for survival. No other species does that, just as no other species has such a range of spirit, from nobility to degradation, or such a need to ponder the moral implications as it fluctuates from one to the other. But, whatever human nature is—with all of its capacity for folly, capriciousness and evil as well as virtue, magnanimity and high-mindedness—it is embedded in the species through evolution and not subject to manipulation by man-made institutions.

Fourth, the power of the progress idea stems in part from the fact that it derives from a fundamental Christian doctrine—the idea of providence, of redemption….

“By creating the expectation of a radical alteration in human affairs,” writes Gray, “Christianity . . . founded the modern world.” But the modern world retained a powerful philosophical outlook from the classical world—the Socratic faith in reason, the idea that truth will make us free; or, as Gray puts it, the “myth that human beings can use their minds to lift themselves out of the natural world.” Thus did a fundamental change emerge in what was hoped of the future. And, as the power of Christian faith ebbed, along with its idea of providence, the idea of progress, tied to the Socratic myth, emerged to fill the gap. “Many transmutations were needed before the Christian story could renew itself as the myth of progress,” Gray explains. “But from being a succession of cycles like the seasons, history came to be seen as a story of redemption and salvation, and in modern times salvation became identified with the increase of knowledge and power.”

Thus, it isn’t surprising that today’s Western man should cling so tenaciously to his faith in progress as a secular version of redemption. As Gray writes, “Among contemporary atheists, disbelief in progress is a type of blasphemy. Pointing to the flaws of the human animal has become an act of sacrilege.” In one of his more brutal passages, he adds:

Humanists believe that humanity improves along with the growth of knowledge, but the belief that the increase of knowledge goes with advances in civilization is an act of faith. They see the realization of human potential as the goal of history, when rational inquiry shows history to have no goal. They exalt nature, while insisting that humankind—an accident of nature—can overcome the natural limits that shape the lives of other animals. Plainly absurd, this nonsense gives meaning to the lives of people who believe they have left all myths behind.

In The Silence of Animals, Gray explores all this through the works of various writers and thinkers. In the process, he employs history and literature to puncture the conceits of those who cling to the progress idea and the humanist view of human nature. Those conceits, it turns out, are easily punctured when subjected to Gray’s withering scrutiny….

And yet the myth of progress is so powerful in part because it gives meaning to modern Westerners struggling, in an irreligious era, to place themselves in a philosophical framework larger than just themselves….

Much of the human folly catalogued by Gray in The Silence of Animals makes a mockery of the earnest idealism of those who later shaped and molded and proselytized humanist thinking into today’s predominant Western civic philosophy.

RACE AS A SOCIAL CONSTRUCT

David Reich’s hot new book, Who We Are and How We Got Here, is causing a stir in genetic-research circles. Reich, who takes great pains to assure everyone that he isn’t a racist, and who deplores racism, is nevertheless candid about race:

I have deep sympathy for the concern that genetic discoveries could be misused to justify racism. But as a geneticist I also know that it is simply no longer possible to ignore average genetic differences among “races.”

Groundbreaking advances in DNA sequencing technology have been made over the last two decades. These advances enable us to measure with exquisite accuracy what fraction of an individual’s genetic ancestry traces back to, say, West Africa 500 years ago — before the mixing in the Americas of the West African and European gene pools that were almost completely isolated for the last 70,000 years. With the help of these tools, we are learning that while race may be a social construct, differences in genetic ancestry that happen to correlate to many of today’s racial constructs are real….

Self-identified African-Americans turn out to derive, on average, about 80 percent of their genetic ancestry from enslaved Africans brought to America between the 16th and 19th centuries. My colleagues and I searched, in 1,597 African-American men with prostate cancer, for locations in the genome where the fraction of genes contributed by West African ancestors was larger than it was elsewhere in the genome. In 2006, we found exactly what we were looking for: a location in the genome with about 2.8 percent more African ancestry than the average.

When we looked in more detail, we found that this region contained at least seven independent risk factors for prostate cancer, all more common in West Africans. Our findings could fully account for the higher rate of prostate cancer in African-Americans than in European-Americans. We could conclude this because African-Americans who happen to have entirely European ancestry in this small section of their genomes had about the same risk for prostate cancer as random Europeans.

Did this research rely on terms like “African-American” and “European-American” that are socially constructed, and did it label segments of the genome as being probably “West African” or “European” in origin? Yes. Did this research identify real risk factors for disease that differ in frequency across those populations, leading to discoveries with the potential to improve health and save lives? Yes.

While most people will agree that finding a genetic explanation for an elevated rate of disease is important, they often draw the line there. Finding genetic influences on a propensity for disease is one thing, they argue, but looking for such influences on behavior and cognition is another.

But whether we like it or not, that line has already been crossed. A recent study led by the economist Daniel Benjamin compiled information on the number of years of education from more than 400,000 people, almost all of whom were of European ancestry. After controlling for differences in socioeconomic background, he and his colleagues identified 74 genetic variations that are over-represented in genes known to be important in neurological development, each of which is incontrovertibly more common in Europeans with more years of education than in Europeans with fewer years of education.

It is not yet clear how these genetic variations operate. A follow-up study of Icelanders led by the geneticist Augustine Kong showed that these genetic variations also nudge people who carry them to delay having children. So these variations may be explaining longer times at school by affecting a behavior that has nothing to do with intelligence.

This study has been joined by others finding genetic predictors of behavior. One of these, led by the geneticist Danielle Posthuma, studied more than 70,000 people and found genetic variations in more than 20 genes that were predictive of performance on intelligence tests.

Is performance on an intelligence test or the number of years of school a person attends shaped by the way a person is brought up? Of course. But does it measure something having to do with some aspect of behavior or cognition? Almost certainly. And since all traits influenced by genetics are expected to differ across populations (because the frequencies of genetic variations are rarely exactly the same across populations), the genetic influences on behavior and cognition will differ across populations, too.

You will sometimes hear that any biological differences among populations are likely to be small, because humans have diverged too recently from common ancestors for substantial differences to have arisen under the pressure of natural selection. This is not true. The ancestors of East Asians, Europeans, West Africans and Australians were, until recently, almost completely isolated from one another for 40,000 years or longer, which is more than sufficient time for the forces of evolution to work. Indeed, the study led by Dr. Kong showed that in Iceland, there has been measurable genetic selection against the genetic variations that predict more years of education in that population just within the last century….

So how should we prepare for the likelihood that in the coming years, genetic studies will show that many traits are influenced by genetic variations, and that these traits will differ on average across human populations? It will be impossible — indeed, anti-scientific, foolish and absurd — to deny those differences. [“How Genetics Is Changing Our Understanding of ‘Race’”, The New York Times, March 23, 2018]

Reich engages in a lot of non-scientific wishful thinking about racial differences and how they should be treated by “society”, none of which is within his purview as a scientist. Reich’s forays into psychobabble have been addressed at length by Steve Sailer (in two posts) and Gregory Cochran (in five posts). Suffice it to say that Reich is trying in vain to minimize the scientific fact of racial differences that show up crucially in intelligence and rates of violent crime.

The lesson here is that it’s all right to show that race isn’t a social construct as long as you proclaim that it is a social construct. This is known as talking out of both sides of one’s mouth — another manifestation of balderdash.

DIVERSITY IS GOOD, EXCEPT WHEN IT ISN’T

I now invoke Robert Putnam, a political scientist known mainly for his book Bowling Alone: The Collapse and Revival of American Community (2000), in which he

makes a distinction between two kinds of social capital: bonding capital and bridging capital. Bonding occurs when you are socializing with people who are like you: same age, same race, same religion, and so on. But in order to create peaceful societies in a diverse multi-ethnic country, one needs to have a second kind of social capital: bridging. Bridging is what you do when you make friends with people who are not like you, like supporters of another football team. Putnam argues that those two kinds of social capital, bonding and bridging, do strengthen each other. Consequently, with the decline of bonding capital inevitably comes the decline of bridging capital, leading to greater ethnic tensions.

In later work on diversity and trust within communities, Putnam concludes that

other things being equal, more diversity in a community is associated with less trust both between and within ethnic groups….

Even when controlling for income inequality and crime rates, two factors which conflict theory states should be the prime causal factors in declining inter-ethnic group trust, more diversity is still associated with less communal trust.

Lowered trust in areas with high diversity is also associated with:

- Lower confidence in local government, local leaders and the local news media.

- Lower political efficacy – that is, confidence in one’s own influence.

- Lower frequency of registering to vote, but more interest and knowledge about politics and more participation in protest marches and social reform groups.

- Higher political advocacy, but lower expectations that it will bring about a desirable result.

- Less expectation that others will cooperate to solve dilemmas of collective action (e.g., voluntary conservation to ease a water or energy shortage).

- Less likelihood of working on a community project.

- Less likelihood of giving to charity or volunteering.

- Fewer close friends and confidants.

- Less happiness and lower perceived quality of life.

- More time spent watching television and more agreement that “television is my most important form of entertainment”.

It’s not as if Putnam is a social conservative who is eager to impart such news. To the contrary, as Michael Jonas writes in “The Downside of Diversity”, Putnam’s

findings on the downsides of diversity have also posed a challenge for Putnam, a liberal academic whose own values put him squarely in the pro-diversity camp. Suddenly finding himself the bearer of bad news, Putnam has struggled with how to present his work. He gathered the initial raw data in 2000 and issued a press release the following year outlining the results. He then spent several years testing other possible explanations.

When he finally published a detailed scholarly analysis … , he faced criticism for straying from data into advocacy. His paper argues strongly that the negative effects of diversity can be remedied, and says history suggests that ethnic diversity may eventually fade as a sharp line of social demarcation.

“Having aligned himself with the central planners intent on sustaining such social engineering, Putnam concludes the facts with a stern pep talk,” wrote conservative commentator Ilana Mercer….